Internal vs. External Validity: Why Your Internal Linking Tests Keep Giving You Wrong Answers

Your internal-linking test is probably telling you the wrong story. Not because the data is bad, but because two different questions, “did my change cause this?” and “will this work somewhere else?”, get treated as one. They aren’t. Internal validity asks whether the effect you observed was actually caused by your change: did the ranking lift come from your link adjustments, or from algorithm updates, seasonality, or competitor moves? External validity asks whether the result generalizes: does the same pattern hold across other site sections, industries, or content types? Conflate them and you’ll either declare victory on noise or, just as often, kill a strategy that worked once and would have worked again if you’d known where to test it next.

What Internal Validity Actually Measures

Internal validity asks one question: can you confidently say your internal linking changes caused the ranking movement you observed, or might something else explain it? In SEO experiments (and frankly in any quasi-experimental setting where you don’t get to randomize), this distinction matters because Google’s algorithm responds to hundreds of variables simultaneously. Content updates, competitor activity, seasonal trends, technical changes, and core updates all create noise that can mask or mimic the effect of your link modifications.

Quick vocabulary

- Internal validity: The degree to which the observed effect can be attributed to the intervention rather than to a confound. “Did my change cause this?”

- External validity: The degree to which the finding generalizes beyond the specific sample, time, and conditions of the test. “Will this work somewhere else?”

- Selection bias: When test and control pages differ systematically before the intervention, the difference you measure afterward is contaminated.

- Regression to the mean: Pages chosen because they’re underperforming will tend to drift back toward their average regardless of any intervention. The “fix” gets the credit anyway.

- Hawthorne effect: In SEO terms, the version where pages you’re actively watching get touched, refreshed, or crawled more often than the pages you ignore. Attention itself is a confound.

- Control variable: A factor held constant (or matched between cohorts) so it can’t explain the observed difference. Content length, page age, and source authority are the usual suspects.

The difference between causation and correlation becomes critical during test design and interpretation. If you add internal links to ten pages and see rankings improve, correlation exists, but did the links cause the improvement? Without controlling for confounding variables, you cannot know. Strong internal validity means isolating your variable through techniques like control groups, staggered rollouts, or A/B testing that rule out alternative explanations.

Low internal validity produces misleading conclusions. You might credit internal links for a ranking boost that actually came from a sitewide technical fix, or, more painfully, dismiss a genuinely effective strategy because an algorithm update masked its impact. High internal validity gives you clean signal: when rankings move, you know why, which means you can replicate successful tactics and stop burning resources on coincidental correlations.

Pro tip

Before you run the test, write down the result that would disconfirm your hypothesis. If you can’t articulate what failure looks like, internal validity is going to be hard to claim afterward, because every outcome will look like confirmation.

What External Validity Actually Measures

External validity asks a deceptively simple question: will the internal linking pattern that boosted rankings on your e-commerce site also work for a SaaS blog, a local business directory, or your client’s site next quarter? It measures generalizability: whether your findings transfer across different contexts, populations, and conditions.

A test with strong external validity produces insights that hold true beyond the narrow circumstances where you first observed them. Say you discover that adding contextual links in the first 200 words improves rankings, and that pattern repeats across ten different sites in three industries over six months. That’s a finding that travels well.

A clean result on one site is a hypothesis. The same result on five different sites is starting to look like a principle.

Weak external validity means your result was site-specific, time-bound, or niche-dependent. Perhaps your internal linking wins only worked because your site had unusually high domain authority, or because you tested during a core algorithm update, or because your industry has unique user behavior patterns. The mechanics succeeded once but don’t replicate elsewhere.

This matters because most SEOs want portable strategies, not one-off flukes. If your test only works under the exact conditions where you ran it, you’ve learned something interesting about that particular site but nothing actionable for your next project. External validity determines whether you’ve found a tactic or uncovered a principle, and that distinction shapes every recommendation you make to stakeholders. Honestly, this is where most published “case studies” quietly fall apart. The numbers are real. The generality is wishful thinking.

The Trade-Off SEOs Miss

Here’s the thing: you can’t optimize for both perfectly. Every SEO experiment forces a choice.

Highly controlled tests give you clean answers. Change one variable, say, exact-match anchor text versus branded anchors, while holding everything else constant. Identical test pages, identical link placements, identical content length. You’ll know with reasonable confidence what caused any ranking change. That’s strong internal validity.

But those artificial conditions create a problem. Real websites don’t have perfectly matched pages. Real link profiles mix anchor types naturally. Real content varies in quality, length, and topic coverage. Your controlled test might prove that exact-match anchors work in a lab setting, but it tells you nothing about whether they’ll work on your messy, real client site. That’s weak external validity.

| Design choice | High internal, low external | Balanced two-phase design |
|---|---|---|
| Sample | A handful of carefully matched pages on one site | Initial control on one site, then replication across page types, sections, and properties |
| Variable count | One variable changed, everything else frozen | One variable in phase 1, then deliberate variation of secondary factors in phase 2 |
| Page selection | Artificially similar pages: length, age, topic, and authority all clamped | Matched within phase 1, deliberately varied within phase 2 |
| What you learn | Whether the effect can exist under ideal conditions | Whether the effect exists and where its boundary conditions sit |
| Failure mode | Over-generalizing a result that only held in the lab | Slower to a conclusion, harder to summarize for stakeholders |

Now flip the scenario. Test on live client sites with actual traffic, existing link profiles, and all their glorious complexity. Now you’re measuring what actually happens in the wild: strong external validity. But when rankings change, was it your new internal linking structure? The algorithm update that happened mid-test? Seasonal search trends? Competitor activity? Good luck isolating cause from noise. That’s weak internal validity.

The solution isn’t trying to have both. It’s choosing deliberately based on what you need to learn. Testing a completely new hypothesis? Start with controlled conditions to establish if the effect exists at all. Validating whether a proven tactic works in your specific context? Accept the mess and test in the wild. Match your validity priorities to your actual question, not to some imagined perfect study.

Case Study: When Internal Validity Breaks Down

The Setup

A mid-sized e-commerce site ran a 90-day internal linking experiment, adding contextual links from high-authority category pages to underperforming product pages. Traffic to target pages increased 34%, and the team declared victory. But the interpretation proved premature. During the same period, a major competitor had shut down, shifting market share across the industry. The traffic bump reflected external market conditions, not the linking strategy. When the team rolled out the same approach to a second product category three months later, results were flat. This case reveals why distinguishing internal validity (did our intervention cause the observed effect?) from external validity (will this work elsewhere?) matters for experiment design and business decisions.

Watch for

Selecting your test pages because they’re underperforming is one of the cleanest paths to a false-positive read. Underperformers regress toward the mean on their own. Use a matched-control group drawn from the same performance band, not from your top performers, or the lift you measure is partly just statistical gravity.
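To see how much “statistical gravity” there can be, here is a minimal simulation sketch. Everything in it is an assumption made for illustration: the page count, the click levels, and the noise sizes are invented, and no intervention is applied at any point. It picks the bottom 10% of pages by one period’s clicks and checks how the same pages look in the next period.

```python
import random
import statistics

def simulate_regression_to_mean(n_pages=1000, seed=42):
    """Two measurement periods with NO intervention between them.

    All numbers are invented for illustration, not drawn from any real site.
    """
    rng = random.Random(seed)
    # Each page has a stable "true" weekly click level...
    true_level = [rng.gauss(100, 15) for _ in range(n_pages)]
    # ...and each period adds its own independent noise on top of it.
    period_1 = [t + rng.gauss(0, 20) for t in true_level]
    period_2 = [t + rng.gauss(0, 20) for t in true_level]

    # Select "underperformers": the bottom 10% by period-1 clicks.
    ranked = sorted(range(n_pages), key=lambda i: period_1[i])
    bottom = ranked[: n_pages // 10]

    before = statistics.mean(period_1[i] for i in bottom)
    after = statistics.mean(period_2[i] for i in bottom)
    return before, after

before, after = simulate_regression_to_mean()
print(f"bottom decile, period 1: {before:.1f}")
print(f"same pages, period 2:    {after:.1f}")
```

The selected pages improve in the second period even though nothing was done to them, because part of what made them look bad in the first period was noise. A control group drawn from the same band shows the same drift, which is what lets you subtract it out.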

What Actually Happened

The competitor’s exit turned out to be only one of several confounds. The traffic gains weren’t caused by the internal linking changes alone or, more accurately, weren’t caused by them at all. A technical audit revealed that the site had been gradually recovering from a previous penalty during the test period. The recovery timeline coincided almost perfectly with the link optimization rollout, making it appear that internal links drove the improvement. Further investigation showed that competitor sites with no internal linking changes experienced similar upward trends during the same window, suggesting that broader algorithm updates, not the links, were driving the trend. The team identified these confounds by comparing the test site’s performance against a control group of similar sites and by checking Google Search Console for manual action removals that had taken effect weeks before the internal linking experiment began.

What This Teaches Us

Prioritize controlled variables: isolate one change at a time in your link tests, document competing factors like algorithm updates or seasonal traffic shifts, and run experiments long enough to capture representative user behavior. Before concluding that internal links drove a ranking change, audit for confounds: new content published, technical fixes deployed, competitor movements. Use comparison groups when possible: test pages receiving new links versus structurally similar pages that don’t. Track leading indicators beyond rankings: click-through rates from source pages, time-on-site patterns, conversion paths. Build a pre-registration habit: write down your hypothesis, success metrics, and analysis plan before launching tests. This prevents post-hoc rationalization and strengthens your ability to distinguish signal from noise, making your link strategy decisions defensible and repeatable across campaigns.

Case Study: When External Validity Fails

The Original Success

An e-commerce site added 50 internal links from high-authority category pages to underperforming product pages, then measured a 34% increase in organic traffic to those products over eight weeks. The test appeared definitive: internal links drove rankings. The team had carefully controlled for seasonality by comparing against a holdout group of similar products that received no new links. Traffic graphs showed clean separation between the two groups starting exactly when links went live, and the effect persisted through two full business cycles. Leadership approved rolling out the strategy across 2,000 additional products, confident the method would scale based on the strength of these initial results.

The Replication Failure

Then the team attempted to replicate their internal linking wins across the company’s international properties. The traffic lifts vanished. The culprits, predictably enough, were contextual factors they hadn’t controlled for. Regional sites used different content management systems, had varying content quality baselines, and faced distinct competitive landscapes. What worked on the flagship U.S. site, where content was dense and well-structured, flopped on newer European properties with sparse internal linking infrastructure. The original test had high internal validity (the changes genuinely caused the observed effect) but poor external validity (the findings didn’t generalize beyond specific conditions). Key differences included site authority, existing link depth, and user search behavior patterns that varied by market.

Why Context Mattered

Success hinged on matching the test environment to real conditions. The team that embedded links in genuinely relevant anchor text within high-quality content saw sustained traffic gains: their tight causal control (internal validity) operated within realistic conditions (external validity). Conversely, the artificial footer-injection test produced clean data but worthless insights, because no legitimate site would replicate those conditions. The key variable was whether the experimental setup could plausibly exist in production without triggering algorithmic red flags. Tests that prioritized one validity type while ignoring the other consistently failed to generate actionable intelligence for actual deployment.

Note

External-validity failures are usually invisible until you try to scale. That’s why a deliberate phase-2 replication step, even on just two or three other pages or sections, pays for itself the first time it stops a flawed rollout. Ahrefs’ own writing on SEO experimentation hits the same note: a single positive site-level result is the start of the investigation, not the end of it.

How to Strengthen Internal Validity in Your Tests

Reducing confounding variables requires deliberate design choices before, during, and after your internal linking test. Start with control groups: identify pages that won’t receive new links but share similar characteristics with your test pages, letting you separate link effects from site-wide traffic shifts. Isolate one variable at a time. If you’re testing anchor text optimization, don’t simultaneously adjust link placement or add multiple links to the same target pages.

Account for temporal factors by running tests long enough to capture weekly patterns and, ideally, seasonal variation. A two-week test that happens to include a holiday or major news event affecting search behavior will probably show misleading results. Track and document all site-wide changes during your test window: CMS updates, other SEO initiatives, content refreshes on non-test pages, or technical modifications that might influence crawl behavior or rankings.

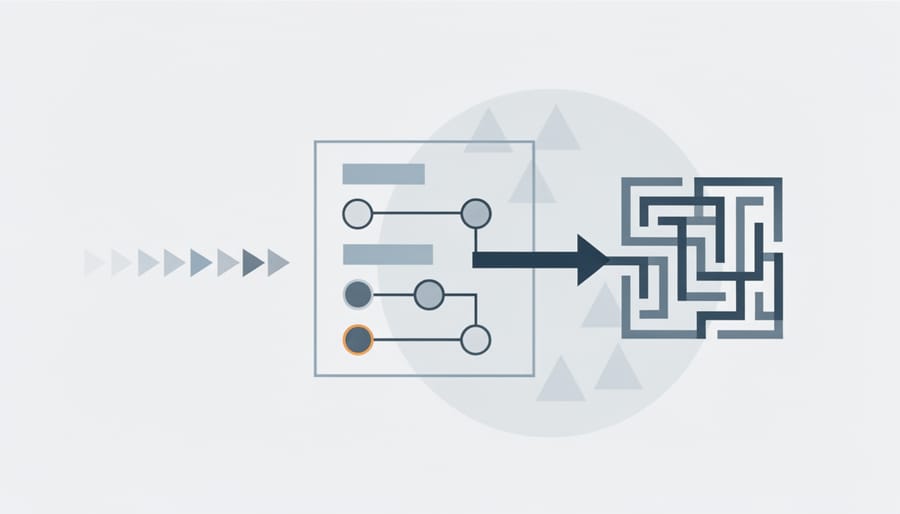

Test design pipeline

Establish statistical baselines using historical performance data before making changes. Calculate expected traffic ranges, click-through rates, and ranking distributions so you can distinguish meaningful shifts from normal fluctuations. Compare your test pages against both their own history and control group performance.
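As a rough sketch of what an “expected traffic range” could look like, the snippet below computes a mean plus-or-minus two standard deviations band from historical weekly clicks. The weekly numbers are made up, the function names are hypothetical, and the choice of two standard deviations is an assumption: it treats weeks as roughly independent and roughly normal, which real traffic often is not.

```python
import statistics

def noise_band(weekly_clicks, k=2.0):
    """Return (low, high): the mean +/- k sample standard deviations."""
    mean = statistics.mean(weekly_clicks)
    sd = statistics.stdev(weekly_clicks)
    return mean - k * sd, mean + k * sd

def outside_band(value, weekly_clicks, k=2.0):
    """True if a new weekly value falls outside the historical band."""
    low, high = noise_band(weekly_clicks, k)
    return value < low or value > high

# Invented pre-test history: eight weeks of clicks for one page group.
baseline = [410, 395, 430, 402, 388, 421, 415, 399]

low, high = noise_band(baseline)
print(f"expected range: {low:.0f} to {high:.0f} clicks/week")
for observed in (425, 470):
    verdict = "outside" if outside_band(observed, baseline) else "inside"
    print(observed, "is", verdict, "the baseline band")
```

A post-change week that lands inside the band is indistinguishable from an ordinary week. A week outside it is worth a closer look, but it still needs the control-group comparison before it counts as evidence for the links.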

Consider running pre-post analysis with holdout groups. Split your candidate pages into test and control cohorts matched by current performance metrics, then apply changes only to the test group. This paired comparison strengthens causal claims about what your internal links actually accomplished versus what would have happened anyway.
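One way to sketch that matched split, and the comparison that follows it, is below. This is an illustrative approach rather than a prescribed method: pages are sorted by baseline clicks, neighbours are paired, and one page from each pair is randomly assigned to the test group. The lift is then estimated as the test group’s average change minus the control group’s average change (a simple difference-in-differences). The URLs, click counts, and function names are all hypothetical.

```python
import random

def matched_split(baseline_clicks, seed=0):
    """Pair pages with similar baselines, then randomize within each pair."""
    rng = random.Random(seed)
    ranked = sorted(baseline_clicks, key=baseline_clicks.get)
    test, control = [], []
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)
        test.append(pair[0])
        control.append(pair[1])
    return test, control  # with an odd page count, the last page is left out

def diff_in_diff(before, after, test, control):
    """(average change in test) minus (average change in control)."""
    def avg_change(pages):
        return sum(after[p] - before[p] for p in pages) / len(pages)
    return avg_change(test) - avg_change(control)

# Invented baseline clicks per page.
before = {"/p/a": 10, "/p/b": 12, "/p/c": 50, "/p/d": 52, "/p/e": 90}
test, control = matched_split(before)

# Invented follow-up data: every page gains 5 clicks (a site-wide shift),
# and pages in the test group gain 3 more.
after = {page: clicks + 5 for page, clicks in before.items()}
for page in test:
    after[page] += 3

print("test:", test)
print("control:", control)
print("estimated lift per page:", diff_in_diff(before, after, test, control))
```

In the invented data, a naive before-and-after read on the test pages would credit the links with the full gain. Subtracting the control group’s change removes the part that would have happened anyway.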

Document everything: which pages received links, when changes went live, what other site activity occurred, and how you selected test versus control groups. This record becomes essential when interpreting results or defending conclusions to stakeholders.

How to Improve External Validity Without Losing Control

You don’t need to choose between rigorous control and real-world applicability. The key is systematic expansion: start narrow, then broaden deliberately.

Test across multiple page types. If you validated anchor text impact on blog posts, replicate the pattern on product pages and category pages. Different content structures, user intents, and crawl depths may produce different outcomes. Document what stays consistent and what changes. Moz’s archive of public SEO tests is a decent calibration sample for how often a finding on one page type fails to transfer to another. In my experience, that happens more often than the original write-ups acknowledge.

Vary your anchor text patterns. Don’t just test exact-match versus branded. Include partial matches, LSI variations, and contextual phrases. This is the step that reveals whether your findings depend on one specific implementation or actually represent a broader principle.

Replicate on different site sections. Run the same test structure in both high-authority and low-authority areas of your site. Test on new versus established pages. These variations help you understand boundary conditions: where does the effect hold, and where does it weaken?

Document context variables that might affect outcomes. Record page age, existing backlink profiles, content length, topical relevance, and crawl frequency. You’re building a map of moderating factors. When results differ across contexts, these notes help explain why and inform future prediction.

The strategy: preserve your core control group design while systematically introducing variation in non-essential factors. Each replication that confirms your finding expands its generalizability. Each deviation reveals important nuance about when and where the principle applies.
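A small sketch of how that “map of moderating factors” might be tallied follows. The record fields, labels, and numbers are hypothetical; the only idea taken from the text is that each replication is logged with its context and then grouped by one context variable at a time, to see where the effect holds and where it weakens.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Replication:
    page_type: str          # e.g. "blog" or "product"
    section_authority: str  # e.g. "high" or "low"
    lift: float             # measured lift versus the control group
    noise_floor: float      # lift that normal fluctuation alone could produce

def holds_by(replications, moderator):
    """For each value of one context variable: (runs where effect held, total runs)."""
    tally = defaultdict(lambda: [0, 0])
    for run in replications:
        key = getattr(run, moderator)
        tally[key][1] += 1
        if run.lift > run.noise_floor:
            tally[key][0] += 1
    return {key: tuple(counts) for key, counts in tally.items()}

# Invented results from four replications of the same test design.
runs = [
    Replication("blog", "high", 0.12, 0.05),
    Replication("blog", "low", 0.03, 0.05),
    Replication("product", "high", 0.09, 0.05),
    Replication("product", "low", 0.01, 0.05),
]

print(holds_by(runs, "page_type"))
print(holds_by(runs, "section_authority"))
```

In this invented example the effect splits evenly by page type but cleanly by section authority, which would point to authority as the boundary condition worth testing next. Four runs is far too few to conclude anything on a real site; the point is the bookkeeping, not the verdict.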

Putting It Together

The distinction matters most when making decisions. Reach for internal validity when you need ironclad proof that a specific change caused an observed outcome, which is essential for diagnosing what broke or validating a controversial recommendation to stakeholders. Prioritize external validity when you need confidence that a tactic will perform consistently across multiple pages, site sections, or client accounts, which is critical before rolling out resource-intensive changes at scale.

Worth re-running for

- A confirmed core update landed inside your test window

- Test pages were selected because they were underperforming (regression risk)

- Simultaneous content edits or technical fixes shipped to test pages

- Effect size is within normal weekly noise of your baseline

- Result is dramatic enough that stakeholders will resource a rollout based on it

Accept the result for

- Matched control group, no update in window, effect comfortably above noise floor

- Pre-registered hypothesis and analysis plan

- Effect persists through at least two seasonal cycles or two months past launch

- Control pages, drawn from the same baseline band, did not move

- Result is consistent with an independent replication on another page type

Neither type exists in pure form. Every experiment trades off some causal clarity for broader applicability or vice versa. In practice, run tightly controlled experiments to establish causation first, then deliberately relax controls in follow-up tests that sacrifice precision for scope, confirming your intervention works beyond the original context. This two-phase approach lets you prove it works, then prove it generalizes, giving you both the mechanistic understanding to troubleshoot failures and the empirical confidence to invest in winners across your portfolio. I’d argue most teams skip phase two because phase one looked so clean. That’s the mistake.

Try it this week

Re-audit your most recent “winning” internal-linking test before you scale it.

1. Pull the test window from your analytics. Cross-check against the Google Search Status Dashboard for any confirmed update inside that window.

2. Identify a true matched-control cohort: pages from the same baseline performance band that did not receive links. Plot both lines together.

3. Pick one page type or site section you haven’t tested yet and replicate the design there before approving the full rollout.

If the effect survives the re-audit and the replication, scale it. If it doesn’t, you’ve saved a quarter of wasted implementation work, which is the entire point of doing the test in the first place.
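The cross-check in step 1 comes down to a date-range overlap test. Here is a minimal sketch, assuming you have copied the update dates by hand from the dashboard into a dictionary. The update names and dates below are deliberately fictional placeholders, not real Google updates, and the function names are hypothetical.

```python
from datetime import date

def overlaps(window_start, window_end, update_start, update_end):
    """True if two inclusive date ranges share at least one day."""
    return window_start <= update_end and update_start <= window_end

def updates_in_window(window, updates):
    """Names of updates whose rollout period touches the test window."""
    start, end = window
    return [
        name
        for name, (update_start, update_end) in updates.items()
        if overlaps(start, end, update_start, update_end)
    ]

# Fictional placeholder dates; replace with what the dashboard actually lists.
updates = {
    "hypothetical update A": (date(2030, 3, 5), date(2030, 3, 20)),
    "hypothetical update B": (date(2030, 8, 15), date(2030, 9, 3)),
}
test_window = (date(2030, 3, 15), date(2030, 6, 13))

print(updates_in_window(test_window, updates))
```

Any name in the output means the result belongs in the “worth re-running” column until a control group or a clean re-run says otherwise.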

Related guides

- Six Months of Internal-Link Testing: the long-form companion piece on what changed in our campaign design after running the framework above end to end.

- Z-Test Assumptions for Link Tests: the statistical layer underneath the validity question, for when your significance test is quietly violating its own premises.