Why Your Structured Data Still Isn’t Triggering Rich Results (Testing Strategies That Actually Work)

So your markup validates clean. The Rich Results Test gives you a green checkmark, every required property is present, the JSON-LD parses without a hiccup, and yet two months later the SERP still shows a plain blue link. If you’ve shipped structured data more than a handful of times, you know the feeling. Validation is a syntax check. Eligibility is a Google decision, and the two aren’t the same, no matter what the green checkmark suggests. This guide walks through the testing strategy that closes the gap between “the tools say it’s eligible” and “the SERP actually renders a rich result,” with the diagnostic moves that work when validators run out of road.

What Structured Data Testing Actually Measures

Structured data testing measures two distinct things that get conflated all the time: validation and eligibility. Validation confirms your markup is syntactically correct: proper JSON-LD formatting, required properties present, values in expected formats. Eligibility determines whether Google will actually display rich results based on that markup, which depends on content quality signals, policy compliance, and search-result diversity algorithms that validation tools cannot assess.

Quick vocabulary

- Rich result: A search result enhanced beyond the standard blue link (stars, recipe cards, FAQ accordions, event dates, sitelinks). Driven by structured data plus Google’s eligibility checks.

- Structured data: Machine-readable metadata (usually JSON-LD) embedded in a page to describe its content type, properties, and relationships. The substrate rich results are built on.

- Validator vs renderer: The validator (Schema.org / Rich Results Test) checks syntax and required fields. The renderer (Google’s live indexing pipeline) decides whether to actually show the rich result. Two different judges.

- Schema spam-flag heuristic: Google’s quiet quality filter that suppresses rich results when the markup-to-content ratio looks manipulative: ratings on thin pages, FAQ markup stuffing keywords, hidden review widgets.

- Manual review queue: The internal Google process where flagged markup gets escalated for human review, often invisible to publishers until a manual action lands in Search Console.

Here’s the practical difference. A recipe page might pass Google’s Rich Results Test with zero errors, with name, image, ingredients, cook time, and ratings all present in valid schema.org format. That’s validation success. But if the recipe content is thin, duplicated from another site, or Google already shows three recipe cards for that query, your markup won’t trigger rich results. Validation passed, eligibility failed. Watched this happen on a client’s recipe site for nine straight months. Perfect markup, zero stars.

Most testing tools only check validation. They cannot predict the eligibility decision that happens server-side, using signals invisible to any validator.

This gap explains the common frustration: “my structured data has no errors, but I don’t see stars in search results.” The validator confirmed your syntax. It said nothing about whether Google considers your content rich-result-worthy for competitive queries. Effective testing requires both checks. Validators catch implementation mistakes. Real-world monitoring through Search Console and SERP tracking reveals eligibility. The distinction shifts troubleshooting from “fix the code” to “improve the content and context.”

The Three-Layer Testing Framework

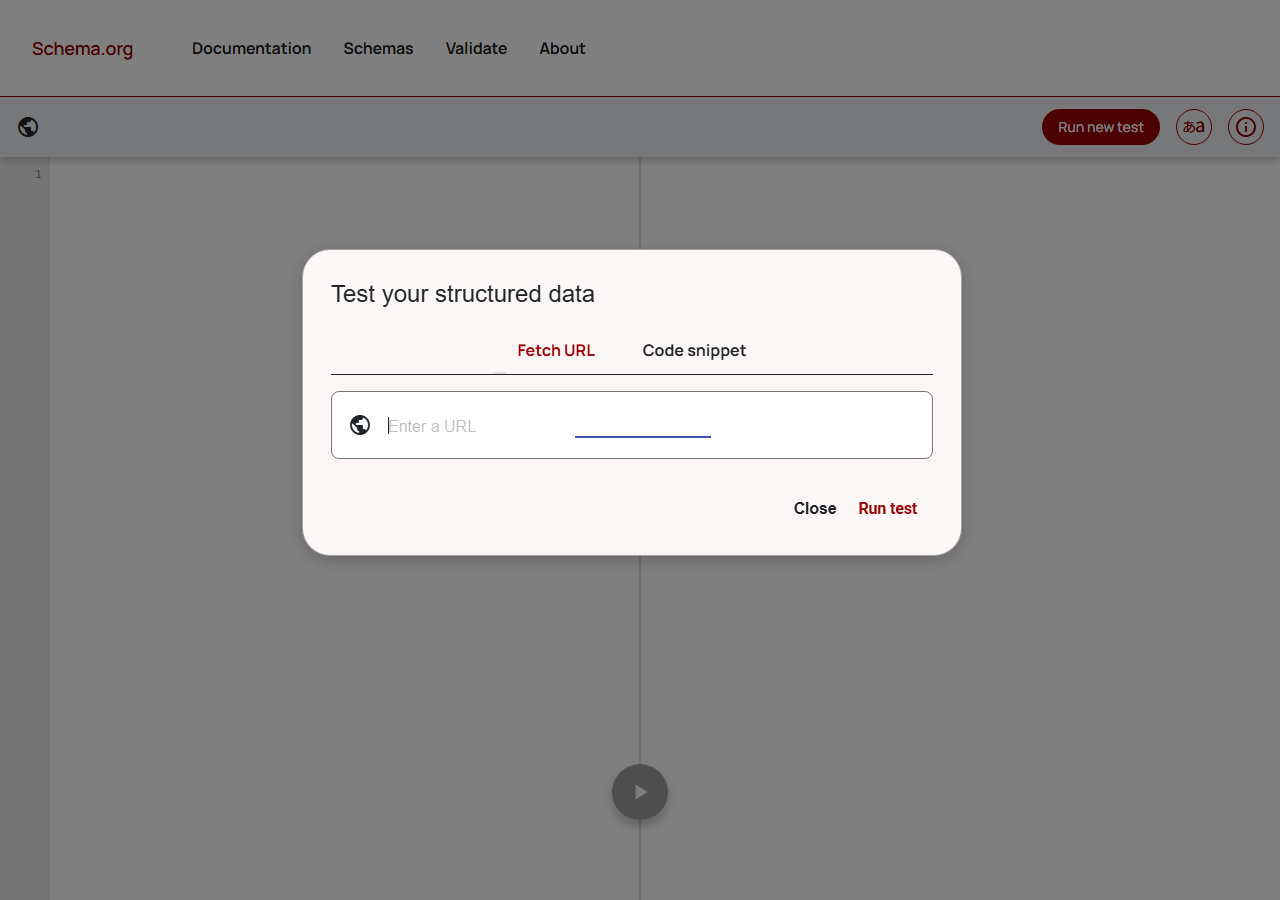

Layer 1: Syntax and Schema Compliance

Before structured data drives rich results, it must clear basic technical checks. Schema.org’s validator and Google’s Rich Results Test serve different functions: the former confirms your JSON-LD or microdata follows specification rules, while the latter verifies Google can parse it and that it meets the requirements for a specific rich result type. Both catch syntax errors (malformed JSON, invalid property names, incorrect nesting), but the Rich Results Test adds Google’s feature-specific requirements, flagging markup that’s valid against the spec but missing properties Google needs for that feature. Neither tool evaluates content quality or policy compliance. Moz’s primer on schema and structured data covers the spec-level layer in detail and is worth bookmarking when you’re onboarding a new dev.

Common failures include missing required properties (like “image” on Recipe markup or “address” on LocalBusiness), using deprecated types, or nesting properties under the wrong parent object. A Restaurant schema might validate on Schema.org but fail Google’s test if “address” lacks “streetAddress” or “addressLocality.” These tools report line-specific errors, which makes fixes straightforward. When the issue is purely structural, anyway. Most of the time it isn’t.
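A quick way to catch missing properties before you even open a validator is a local pre-check. The sketch below assumes a hand-maintained `REQUIRED` table and a hypothetical helper named `missing_properties`; the table is illustrative, not Google’s official list, so check the current documentation for each rich result type before trusting it. It also assumes the block is a single JSON object rather than an array or `@graph`.

```python
import json

# Illustrative only: a hand-maintained table, not Google's official list.
# Confirm the required properties for each type in the current documentation.
REQUIRED = {
    "Recipe": ["name", "image"],
    "LocalBusiness": ["name", "address"],
}


def missing_properties(jsonld_text: str) -> list[str]:
    """Return required properties that are absent or empty in one JSON-LD object."""
    data = json.loads(jsonld_text)
    types = data.get("@type", [])
    if isinstance(types, str):  # "@type" may be a single string or a list
        types = [types]
    required = {prop for t in types for prop in REQUIRED.get(t, [])}
    # Empty strings, empty lists, and nulls count as missing.
    return sorted(prop for prop in required if not data.get(prop))


sample = '{"@context": "https://schema.org", "@type": "Recipe", "name": "Cookies"}'
print(missing_properties(sample))
```

This only tells you a property is absent. It says nothing about whether the value is any good, which is where the later layers come in.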

Pro tip

When the two validators disagree, take Google’s verdict as gospel for SERP purposes, but read the Schema.org validator’s output to understand where Google is applying a stricter requirement than the spec demands. The delta is usually a Google-specific property requirement, not a typo.

A minimal valid JSON-LD block for a recipe looks like this, useful as a reference when you’re paring back a bloated implementation to figure out what’s actually triggering the validator:

<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "Recipe",
  "name": "Classic Chocolate Chip Cookies",
  "image": "https://example.com/cookies.jpg",
  "author": { "@type": "Person", "name": "Jane Doe" },
  "datePublished": "2026-04-12",
  "prepTime": "PT15M",
  "cookTime": "PT12M",
  "recipeIngredient": ["2 cups flour", "1 cup butter", "..."],
  "recipeInstructions": [
    { "@type": "HowToStep", "text": "Preheat oven to 350F." }
  ],
  "aggregateRating": {
    "@type": "AggregateRating",
    "ratingValue": "4.8",
    "reviewCount": "127"
  }
}
</script>

Passing both validators is necessary but insufficient. Markup can be perfectly formed yet still invisible in search results due to content quality, policy violations, or indexing issues the next layers address.
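“Values in expected formats” is the part of validation that’s easiest to script yourself. The sketch below checks that duration fields such as `prepTime` and `cookTime` look like ISO 8601 durations and that `datePublished` parses as an ISO date. The function name `format_problems` and the regular expression are my own; the pattern covers the common `PnDTnHnM` shapes and is an approximation, not a full ISO 8601 parser.

```python
import re
from datetime import date

# Approximate ISO 8601 duration pattern (e.g. PT15M, PT1H30M, P1DT2H).
ISO_DURATION = re.compile(
    r"^P(?!$)(\d+Y)?(\d+M)?(\d+W)?(\d+D)?(T(?=\d)(\d+H)?(\d+M)?(\d+S)?)?$"
)


def format_problems(recipe: dict) -> list[str]:
    """Return human-readable format problems for duration and date fields."""
    problems = []
    for key in ("prepTime", "cookTime", "totalTime"):
        value = recipe.get(key)
        if value is not None and not ISO_DURATION.match(str(value)):
            problems.append(f"{key}: {value!r} is not an ISO 8601 duration")
    published = recipe.get("datePublished")
    if published is not None:
        try:
            # Only the date part is checked; any time suffix is ignored.
            date.fromisoformat(str(published)[:10])
        except ValueError:
            problems.append(f"datePublished: {published!r} is not an ISO 8601 date")
    return problems


recipe = {"prepTime": "PT15M", "cookTime": "12 minutes", "datePublished": "2026-04-12"}
for problem in format_problems(recipe):
    print(problem)
```

A human-readable value like “12 minutes” is the classic offender here: it reads fine on the page and fails in the markup.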

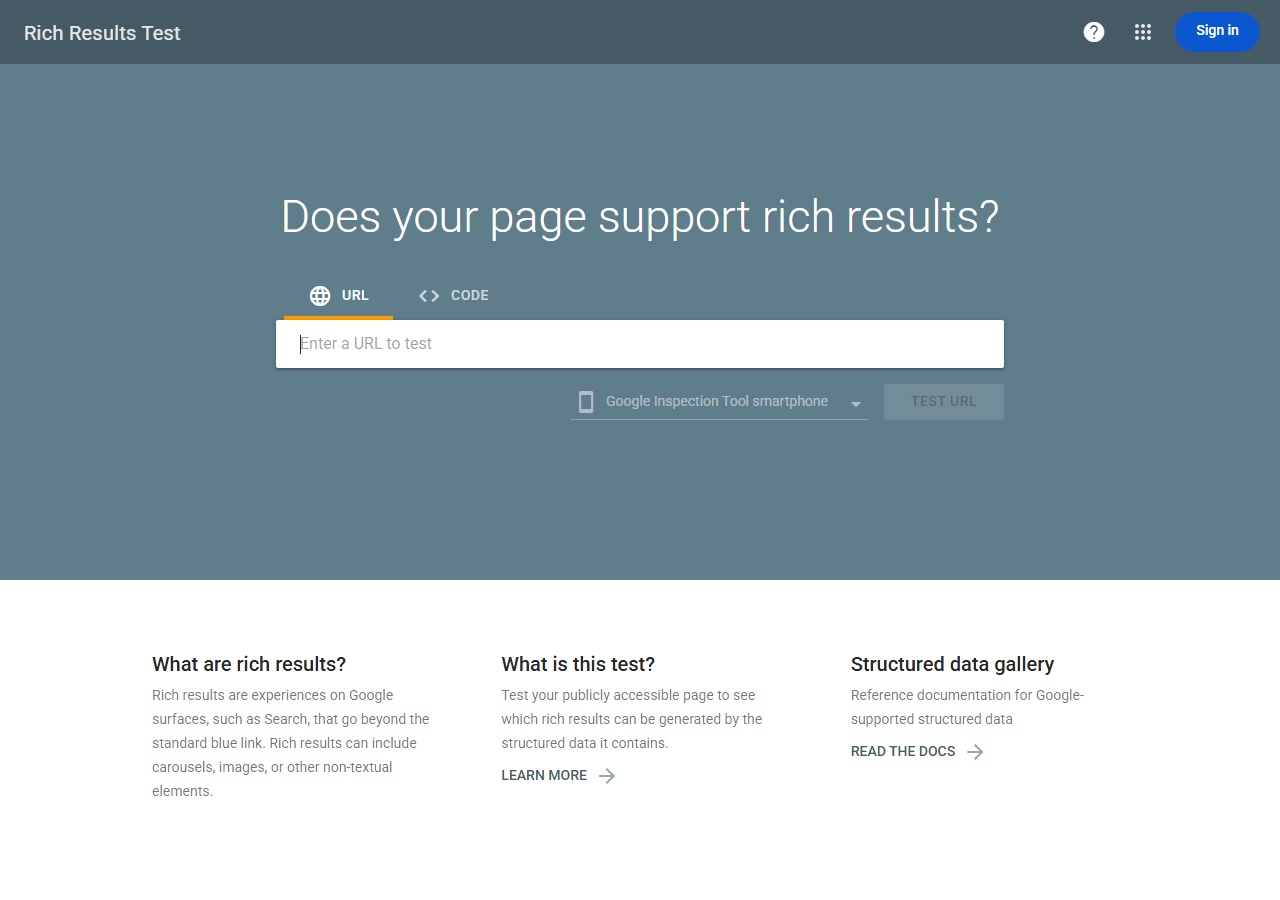

Layer 2: Eligibility and Preview Checks

Passing schema validation doesn’t guarantee rich results. Google applies separate eligibility criteria (content type, policy compliance, competitive thresholds) that determine whether markup qualifies for display. A valid Recipe schema might fail if it lacks required images, aggregate ratings, or sufficient cooking-time detail. Testing at this layer means checking whether your specific markup type meets Google’s undocumented quality bars.

The URL Inspection Tool in Search Console reveals how Google actually parses your page. Pop a URL in and you see detected structured data types, eligible enhancements, and reasons for ineligibility. A page might pass the Rich Results Test but show “Not eligible” here because the tool reports on the version of the page Google actually crawled and indexed, not just the syntax of the live markup. Ahrefs has a useful breakdown of which schema types map to which SERP features; it’s worth referencing when you’re scoping which markup is even worth testing for a given page template.
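If you inspect more than a handful of URLs, the same check can be read out of the URL Inspection API’s JSON response instead of the UI. The sketch below only flattens a response that has already been fetched; it makes no network call. The field names (`inspectionResult`, `richResultsResult`, `detectedItems`, `issueMessage`) reflect the API as I understand it, so treat them as assumptions and confirm against the current reference. The sample payload is hand-written for illustration, not real API output.

```python
def summarize_rich_results(response: dict) -> dict:
    """Flatten the rich-results portion of an already-fetched inspection response."""
    # Field names are assumptions based on my reading of the URL Inspection API.
    result = response.get("inspectionResult", {}).get("richResultsResult", {})
    summary = {
        "verdict": result.get("verdict", "VERDICT_UNSPECIFIED"),
        "types": [],
        "issues": [],
    }
    for detected in result.get("detectedItems", []):
        summary["types"].append(detected.get("richResultType", "unknown"))
        for item in detected.get("items", []):
            for issue in item.get("issues", []):
                summary["issues"].append(
                    (item.get("name", ""), issue.get("severity", ""), issue.get("issueMessage", ""))
                )
    return summary


# Hand-written sample payload for illustration only.
sample_response = {
    "inspectionResult": {
        "richResultsResult": {
            "verdict": "PARTIAL",
            "detectedItems": [
                {
                    "richResultType": "Recipes",
                    "items": [
                        {
                            "name": "Classic Chocolate Chip Cookies",
                            "issues": [
                                {"issueMessage": "Missing field (example)", "severity": "WARNING"}
                            ],
                        }
                    ],
                }
            ],
        }
    }
}

print(summarize_rich_results(sample_response))
```

Storing these summaries per URL over time gives you something to diff when a template change silently drops a detected type.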

Why this layer matters: it exposes the gap between technically correct markup and SERP-worthy content, showing what Google sees versus what validators approve. Compare detected features against Search Console’s Enhancement reports to identify patterns. Recipe carousels may require five-star ratings in your niche, while FAQ markup might face manual action filters. (In my experience, FAQ markup quietly stopped triggering for informational pages around mid-2023, even when the validator still calls it eligible. The bar moved, the validator didn’t.)

Layer 3: Live Performance Monitoring

Validation confirms your markup is syntactically correct, but only Search Console reveals whether Google actually displays rich results. The Performance and Enhancements reports show impressions, clicks, and issue counts for each structured data type you’ve deployed. Monitor the gap between indexed pages with valid markup and those earning enhanced SERP features. Drops often signal content-quality thresholds, policy violations, or competing markup conflicts that validators miss. Cross-reference the Page indexing report (formerly Coverage) to confirm Google crawls and indexes pages before diagnosing why eligible content doesn’t surface as rich results. Like testing in production environments, real-world performance data trumps synthetic checks.

Watch for

Search Console’s Enhancement reports lag actual rendering by 3-7 days, sometimes longer for low-traffic pages. Don’t treat the report as real-time; treat it as a delayed mirror. The fastest signal is a manual URL Inspection on a freshly crawled URL.
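The “gap between valid markup and enhanced features” is easy to quantify once you’ve exported the data. Here’s a minimal sketch, assuming you’ve already merged your Enhancement and Performance exports into rows with the hypothetical keys `schema_type`, `valid`, and `rich_impressions`. That row shape is my own invention, not a Search Console export format.

```python
from collections import defaultdict


def rich_result_gap(rows):
    """Per schema type: valid pages, pages earning rich impressions, and the gap."""
    totals = defaultdict(lambda: {"valid": 0, "enhanced": 0})
    for row in rows:
        if not row["valid"]:
            continue  # invalid markup is a Layer 1 problem, not an eligibility gap
        bucket = totals[row["schema_type"]]
        bucket["valid"] += 1
        if row["rich_impressions"] > 0:
            bucket["enhanced"] += 1
    return {
        schema_type: {**counts, "gap": counts["valid"] - counts["enhanced"]}
        for schema_type, counts in totals.items()
    }


# Made-up rows for illustration.
rows = [
    {"url": "/cookies", "schema_type": "Recipe", "valid": True, "rich_impressions": 120},
    {"url": "/brownies", "schema_type": "Recipe", "valid": True, "rich_impressions": 0},
    {"url": "/faq", "schema_type": "FAQPage", "valid": True, "rich_impressions": 0},
    {"url": "/broken", "schema_type": "Recipe", "valid": False, "rich_impressions": 0},
]

for schema_type, counts in rich_result_gap(rows).items():
    print(schema_type, counts)
```

Given the reporting lag, run this against a trailing window rather than yesterday’s numbers.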

When Validators Pass But Rich Results Don’t Show

The pattern is consistent across markup types: the validator says “eligible,” the SERP says nothing. Here’s the diagnostic comparison, laid out by what each scenario looks like in the wild:

| Factor | Validator passes + rich result shows | Validator passes + nothing shows |
|---|---|---|
| Content depth | Original recipe, 800+ words, photographed dish, named author | Thin aggregation, stock imagery, anonymous byline |
| Review source | Third-party verified (Trustpilot, Google Reviews) plus on-site reviews | First-party reviews only, no verification trail |
| Domain trust | Established brand, varied backlink profile, consistent publishing | Young domain, narrow link profile, sparse publishing history |
| SERP competition | Vertical has room for 5-10 carousel slots, modest aggregator presence | Eventbrite / Ticketmaster / Amazon dominate, rich slots saturated |
| Markup-to-page match | Marked-up entity is the primary subject of the page | Markup describes sidebar content or a secondary widget |
| Policy alignment | Clean against Google’s spam policies, no hidden content | Hidden reviews, FAQ keyword-stuffing, undisclosed sponsorship |

Recipe Markup That Google Ignores

A food blogger added Recipe structured data to a post featuring a classic chocolate chip cookie recipe. The markup passed the Rich Results Test and Search Console validation without errors: correct ingredient lists, prep times, nutrition facts, and properly nested fields. Yet six months later, the page had never earned a rich result in search.

The likely culprit (and I’d bet money on this one) is content-quality signals Google layers on top of technical compliance. The post aggregated an existing recipe with minor tweaks, used stock photography from a free image site, and offered minimal original instruction or context. Google’s recipe guidelines explicitly prefer original recipes with high-quality images showing the actual finished dish. Backlinko’s analysis of rich snippet patterns echoes the same point: the snippets that survive are tied to pages with original depth, not minimum-viable markup.

This case illustrates the validator gap: tools check syntax and schema rules but cannot evaluate content originality, image provenance, or user value. A recipe can be technically flawless yet commercially invisible if it lacks the editorial substance Google rewards. Testing structured data means auditing both code correctness and the underlying content that markup describes. Thin aggregation rarely surfaces, regardless of perfect JSON-LD.

Review Stars Filtered by Trust Signals

Real example. An e-commerce site implemented AggregateRating schema for product reviews. Markup validated perfectly in Google’s Rich Results Test, showed no errors, and appeared in the preview. Yet stars never appeared in search results after weeks of monitoring. Not one.

The root cause wasn’t technical. Google’s algorithms filtered the ratings due to trust signals: reviews were sourced exclusively from the merchant’s own site without third-party verification, and the business lacked established credibility signals like significant web mentions or authoritative backlinks. The validator confirmed structural correctness but couldn’t assess content quality or source legitimacy.

Note

First-party review stars on small e-commerce sites have been quietly demoted since roughly 2019. If your validator says “eligible” and you have zero third-party verification, assume the spam-flag heuristic is suppressing the result and plan a Trustpilot or Google Customer Reviews integration before re-testing.

Testing revealed the gap when comparing validator output against live SERP performance over 30 days. No stars appeared despite valid markup. Investigation showed that comparable, established competitors using identical schema, but backed by verified review platforms (Trustpilot, Google Customer Reviews), consistently displayed stars. The fix required operational changes beyond code: integrating a verified third-party review platform and building merchant credibility through authentic customer feedback channels. Stars appeared within two weeks of implementation, which shows that structured data testing must extend beyond syntax validation to include trust factors and competitive benchmarking.

Event Schema Suppressed by Competition

A national comedy club chain implemented valid Event schema across 40 venues and passed the Rich Results Test with zero errors, yet earned rich snippets for only 8% of event pages in search. The culprit: vertical saturation. Ticketmaster, Eventbrite, and StubHub dominated event-related queries with identical structured data, superior domain authority, and deeper link profiles. Eventbrite’s SimilarWeb traffic profile alone gives you a sense of the wall: hundreds of millions of monthly visits against a regional venue’s thousands.

Testing revealed that technical correctness gets you into the candidate pool but doesn’t guarantee visibility. Google’s ranking algorithms still prioritize trust signals when choosing which marked-up page deserves the enhanced display. The club’s schema worked perfectly in technical terms but competed against platforms with ten-year indexing histories and millions of backlinks. For businesses in crowded event spaces, schema markup functions as table stakes rather than competitive advantage. Honestly, in some verticals it’s barely even that. The solution required both valid markup and traditional SEO investment (content freshness, local citations, and earned media) to climb into the rich-result threshold against entrenched aggregators.

The Test, Diagnose, Iterate Cycle

Validators don’t tell you what to do when the SERP stays plain, so the actual workflow is iterative: ship the markup, watch the live performance window, diagnose the gap, then make one content or trust change at a time so you can attribute the effect. Here’s the cycle in four moves:

[Figure: Test, diagnose, iterate workflow]

The two failure modes I see most often: teams either iterate too fast (changing three things in a week and learning nothing) or treat the first validator pass as the finish line and walk away. Both kill the feedback loop in different ways. The fix is patience plus single-variable changes. Moz’s structured-data guide is reasonable on the spec side but undersells how long the eligibility window actually takes to settle. Plan for weeks, not days.
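One way to enforce the single-variable discipline is to make the change log itself refuse a second change while a page is still being observed. This is a minimal sketch, not a prescribed tool: the `ChangeLog` class is hypothetical, and the 14-day window is an assumption you should stretch to match how long your vertical takes to settle.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Assumption: a two-week minimum observation window per page.
OBSERVATION_WINDOW = timedelta(days=14)


@dataclass
class ChangeLog:
    """Tracks the most recent change per URL and blocks overlapping experiments."""

    entries: dict = field(default_factory=dict)  # url -> (change date, description)

    def record(self, url: str, description: str, on: date) -> bool:
        """Log a change; return False if the URL is still inside its window."""
        previous = self.entries.get(url)
        if previous and on - previous[0] < OBSERVATION_WINDOW:
            return False  # the last change has not had time to show an effect
        self.entries[url] = (on, description)
        return True


log = ChangeLog()
print(log.record("/cookies", "replace stock photo with original image", date(2026, 1, 1)))
print(log.record("/cookies", "add third-party reviews", date(2026, 1, 5)))
print(log.record("/cookies", "add third-party reviews", date(2026, 1, 15)))
```

The second call is rejected because it lands four days after the first change; the third goes through once the window has passed.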

Testing Tools and Their Blind Spots

Google Rich Results Test validates whether your markup qualifies for visual enhancements in search results. It catches syntax errors and type mismatches but misses critical disqualifiers like hidden content, conflicting data across the page, or policy violations that prevent actual display. A passing test doesn’t guarantee rich results; it only confirms the markup is technically capable of producing one.

Schema Markup Validator performs technical validation against schema.org specifications. It identifies malformed JSON-LD, missing required properties, and incorrect data types. What it doesn’t catch (and this is the part that tripped me up for years) is relevance issues like marking up sidebar content instead of primary content, duplicate entities on a single page, or semantic mismatches where technically valid markup describes the wrong thing.

Pro tip

Run a quarterly site-wide crawl with a structured-data extractor (Screaming Frog and similar crawlers can dump every JSON-LD block on the site to a CSV). Diff the markup against the rendered HTML. Mismatches between schema price and on-page price, schema author and rendered byline, or schema event date and visible event date are the single most common silent eligibility killer.
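The diff itself can be a few lines once both sides are extracted. This sketch assumes you already have two dictionaries per URL: one with values pulled from the JSON-LD, one with values scraped from the rendered page and normalized to the same shape (dates in particular). The helper names and the field list (`price`, `author`, `startDate`) are illustrative, and the price normalizer assumes a dot as the decimal separator.

```python
import re
from decimal import Decimal, InvalidOperation


def normalize_price(text):
    """Strip currency symbols and thousands separators; return a Decimal or None."""
    cleaned = re.sub(r"[^\d.]", "", str(text))
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None


def normalize_text(text):
    """Collapse whitespace and ignore case so cosmetic differences don't count."""
    return " ".join(str(text).split()).casefold()


def find_mismatches(schema: dict, rendered: dict) -> list[str]:
    """Return the fields whose schema value disagrees with the on-page value."""
    mismatches = []
    if normalize_price(schema.get("price", "")) != normalize_price(rendered.get("price", "")):
        mismatches.append("price")
    for name in ("author", "startDate"):
        if normalize_text(schema.get(name, "")) != normalize_text(rendered.get(name, "")):
            mismatches.append(name)
    return mismatches


schema_values = {"price": "19.99", "author": "Jane Doe", "startDate": "2026-05-01"}
page_values = {"price": "$24.99", "author": "jane  doe", "startDate": "2026-05-01"}
print(find_mismatches(schema_values, page_values))
```

Here only the price is flagged: the byline differs in case and spacing, which the normalizer deliberately ignores.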

Search Console’s Rich Results report shows what Google actually indexed and whether it triggered enhancements. It reveals the gap between validation and production: markup that passed testing but failed in live crawls due to rendering problems, noindex tags, or content quality filters. Check the Enhancement reports for warnings about unparseable structured data or items excluded due to guidelines. This tool exposes real deployment failures after the fact but lacks the diagnostic detail to identify root causes quickly.

The blind spot all three share: they can’t predict whether valid, indexed markup will actually display rich results for your queries. Google applies query-specific relevance filters and competitive thresholds that no validator simulates. Your markup might be technically perfect yet invisible in SERPs if competitors have stronger signals or if Google deems enrichment unnecessary for that search context. This is why SERP performance optimization requires monitoring actual search appearances, not just passing validation tests.

Building a Repeatable Testing Workflow

Establish a pre-launch checklist before deploying structured data to production. Start with validator tools (Google’s Rich Results Test, Schema.org validator) to confirm syntax, then run real URLs through Search Console’s URL Inspection tool. Note that URL Inspection only works on URLs inside a property you’ve verified, so a locked-down staging host won’t qualify. This reveals whether Google can actually render and parse your markup in context. Validators alone miss JavaScript rendering issues and crawl-accessibility problems.

For deployment, use staged rollout verification by implementing markup on a subset of pages first. Monitor Search Console’s Rich Results report for 7-14 days, watching for error spikes or warnings. Compare impression and click-through data for marked-up pages against control pages to measure actual SERP impact, not just technical validity.
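The treated-versus-control comparison comes down to pooled click-through rates. A minimal sketch, assuming you’ve exported clicks and impressions per page for both groups over the same date range; the function names and the sample numbers are made up for illustration.

```python
def pooled_ctr(pages):
    """Total clicks divided by total impressions across a group of pages."""
    clicks = sum(page["clicks"] for page in pages)
    impressions = sum(page["impressions"] for page in pages)
    return clicks / impressions if impressions else 0.0


def relative_ctr_lift(treated, control):
    """Relative CTR change of treated over control; None if control CTR is zero."""
    baseline = pooled_ctr(control)
    if baseline == 0:
        return None
    return pooled_ctr(treated) / baseline - 1


# Made-up numbers: marked-up pages versus comparable pages without markup.
treated = [{"clicks": 35, "impressions": 600}, {"clicks": 25, "impressions": 400}]
control = [{"clicks": 22, "impressions": 500}, {"clicks": 18, "impressions": 500}]

lift = relative_ctr_lift(treated, control)
print(f"Relative CTR lift: {lift:.1%}")
```

This is a descriptive comparison, not a significance test. With a small page subset, position changes and seasonality can swamp the markup effect, so pick control pages from the same template and the same query class.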

Automate ongoing monitoring through the Search Console API to track eligible versus enhanced impressions at scale. Set up alerts when error rates exceed thresholds or when previously eligible pages lose enhancement. For large sites, build custom scripts that periodically fetch live pages, extract structured data, and validate against your schema requirements, catching drift before Google does.
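The two alerts described above, pages losing an enhancement and error rates crossing a threshold, can be sketched as plain comparisons between snapshots. How you populate the snapshots (Search Console API, crawler export) is up to you; the mapping of URL to detected rich result types and the 5% default threshold are assumptions for illustration.

```python
def lost_enhancements(previous: dict, current: dict) -> dict:
    """Return {url: [types]} for rich result types present before but missing now."""
    lost = {}
    for url, before in previous.items():
        missing = set(before) - set(current.get(url, ()))
        if missing:
            lost[url] = sorted(missing)
    return lost


def error_rate_exceeded(error_items: int, total_items: int, threshold: float = 0.05) -> bool:
    """True when the share of items with errors is above the threshold."""
    if total_items == 0:
        return False
    return error_items / total_items > threshold


# Made-up snapshots from two consecutive monitoring runs.
last_week = {"/cookies": ["Recipe", "Review snippet"], "/events/friday": ["Event"]}
this_week = {"/cookies": ["Recipe"], "/events/friday": ["Event"]}

print(lost_enhancements(last_week, this_week))
print(error_rate_exceeded(error_items=12, total_items=150))
```

A URL that disappears from the current snapshot entirely is reported as having lost everything, which is usually what you want to hear about first.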

Create a testing matrix that covers the edge cases: minimum content thresholds, required versus recommended properties, and variant page templates. Document which properties trigger enhancements versus which only satisfy validators. This distinction matters because passing validation doesn’t guarantee rich results, and understanding the gap between technical compliance and SERP enhancement is what separates effective testing from checkbox exercises.
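A testing matrix like this is just the cross product of the dimensions you care about, with empty columns for what you observe. The sketch below uses hypothetical template names and dimension values; swap in your own. The two result columns are left as `None` on purpose, to be filled in by hand or by your monitoring scripts.

```python
from itertools import product

# Hypothetical dimensions; replace with your site's templates and variants.
TEMPLATES = ["recipe-standard", "recipe-video"]
PROPERTY_SETS = ["required-only", "required+recommended"]
CONTENT_DEPTHS = ["thin", "full"]


def build_matrix():
    """One row per combination, with blank columns for the two outcomes."""
    return [
        {
            "template": template,
            "properties": properties,
            "content": content,
            "validator_pass": None,    # filled in after running the validators
            "rich_result_seen": None,  # filled in after the observation window
        }
        for template, properties, content in product(TEMPLATES, PROPERTY_SETS, CONTENT_DEPTHS)
    ]


matrix = build_matrix()
print(len(matrix), "test cases")
for row in matrix[:2]:
    print(row)
```

Keeping `validator_pass` and `rich_result_seen` as separate columns is the point: the rows where the first is true and the second is false are the ones worth a diagnostic cycle.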

Putting Rich-Results Testing to Work

Structured data testing isn’t a checkbox you tick once; it’s ongoing detective work. Validators confirm syntax, but only live monitoring reveals whether Google actually displays your rich results. Treat eligibility as a moving target. Algorithm updates shift goalposts, competitors crowd categories, and content changes can break previously working markup. The gap between “valid” and “visible” closes only through continuous observation, not periodic audits.

✓ Worth iterating on

- Pages where validator says eligible but Search Console shows zero rich-result impressions

- Markup types where competitors with similar authority are already winning rich results

- Recently-deployed schema still inside the 2-4 week observation window

- Cases where the diagnostic points to content depth or trust, not code

- High-traffic templates where even a partial rich-result lift moves real revenue

✗ Move on from

- Verticals where 3+ aggregators saturate the carousel for every relevant query

- Markup types Google has quietly deprecated or stopped rendering (much of FAQ)

- Thin pages where the underlying content can’t pass a quality bar regardless of schema

- Domains with active manual actions: fix those first, schema later

- Anything you’ve already iterated on three times with no Enhancement-report movement

Build this into your workflow selectively. During routine link and on-page audits, flag pages where structured data exists, validators pass, and rich results still don’t appear. Those are the candidates for the diagnostic cycle above. Skip the rest. The time you save not chasing dead markup is time you can spend on content depth and trust signals, which are probably the actual fix anyway.

Try it this week

Pick three pages with valid schema and no rich result. Run the diagnostic cycle on each.

1. Pull three URLs from Search Console where the Enhancement report shows eligible markup but zero rich-result impressions over the last 28 days.

2. For each, run the URL Inspection Tool plus a competitor SERP scan. Note who is winning the rich result and what they have that you don’t: third-party reviews, content depth, original imagery, link authority.

3. Change one variable on one page (not all three). Wait two weeks. If the rich result lands, replicate the change across the other two. If it doesn’t, move that page to the “move on” pile and reallocate.

Three pages, three weeks, one variable each. That’s the difference between “we tested our schema” and a documented eligibility playbook you can hand to the next person who deploys markup on the site.

Related guides

- Core Web Vitals Testing in Production: Why staging passes and production fails, and how to test what users actually see.

- Migration Systems That Preserved SEO Traffic: Staged-rollout discipline that applies to schema deployments too.