Analytics Tags That Get Your Pages Deindexed (And How to Fix Them)

Audit your existing analytics implementations by scanning the page source of every site for Google Analytics IDs, Tag Manager containers, Facebook Pixels, and other tracking codes that appear across multiple properties. Identical tracking identifiers shared between sites create detectable footprints that search engines can use to map network relationships. Replace shared tracking accounts with property-specific instances, implement server-side tagging to mask client-side code patterns, or use tag management solutions that vary implementation signatures across domains.

Deploy different analytics providers across your network rather than using the same platform everywhere, stagger implementation methods (some sites with a direct gtag.js snippet, others with Tag Manager; legacy analytics.js is no longer a working option because Universal Analytics has stopped processing data), and vary the placement depth of tracking scripts in your HTML structure.

Examine your Google Search Console for manual action notifications related to “unnatural links” or “thin content” that might correlate with tracking footprint discovery, and cross-reference deindexation timing against when you deployed unified tracking systems.

For high-value networks, consider abandoning traditional analytics entirely on satellite sites in favor of server log analysis or privacy-focused alternatives that don’t leave JavaScript fingerprints, accepting reduced data granularity as the cost of maintaining anonymity between properties.

What Analytics Footprints Actually Mean

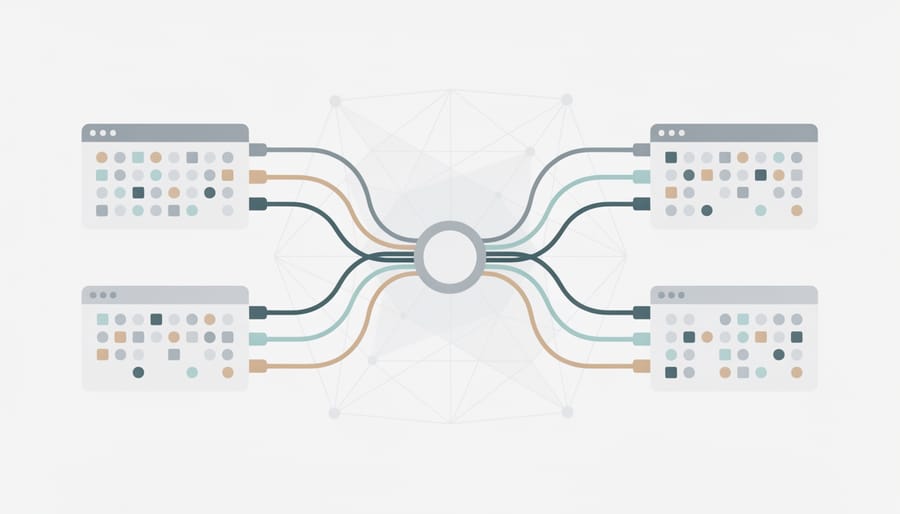

Analytics tagging footprints are the detectable patterns left when multiple sites share identical tracking codes, tag manager configurations, or third-party script implementations. When site A and site B both fire the same Google Analytics property ID, use identical GTM container structures, or load matching sequences of advertising pixels, they create a technical signature that links them together.

Search engines can identify these shared fingerprints by crawling and rendering JavaScript, parsing page source, and analyzing which external resources load on each page. A site network running fifty domains might inadvertently reveal the entire operation through a single reused Facebook Pixel ID or a consistent pattern of Hotjar and Mixpanel implementations across all properties.

The footprint risk compounds when combined with other signals. Identical analytics tagging plus shared hosting plus similar content patterns creates a stronger correlation than any single factor alone. Even sophisticated networks stumble here because tagging decisions often happen at the infrastructure level, making it easy to deploy the same monitoring stack across dozens of sites without considering the visibility cost.

Common footprint sources include property IDs embedded in page source, tag manager container numbers visible in network requests, and the specific combination and firing order of marketing tags. Each creates a machine-readable signature that can map relationships between domains at scale.
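To make “machine-readable signature” concrete, here is a minimal sketch of how the Google identifiers listed above can be pulled out of raw page source. The regular expressions are approximations of the common ID shapes, and the function name `extract_tracking_ids` is purely illustrative.

```python
import re

# Approximate shapes of Google identifiers as they usually appear in page source.
# Assumption: real IDs can differ in length, so treat these patterns as a starting point.
PATTERNS = {
    "universal_analytics": re.compile(r"\bUA-\d{4,10}-\d{1,4}\b"),
    "ga4_measurement": re.compile(r"\bG-[A-Z0-9]{6,12}\b"),
    "gtm_container": re.compile(r"\bGTM-[A-Z0-9]{4,8}\b"),
}


def extract_tracking_ids(html: str) -> dict[str, set[str]]:
    """Return the set of identifiers of each kind found in an HTML string."""
    return {kind: set(pattern.findall(html)) for kind, pattern in PATTERNS.items()}
```

Running this over the saved source of two domains and comparing the resulting sets is enough to show whether they share a property or container.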

Why Search Engines Flag Shared Analytics IDs

The PBN Detection Problem

Search engines discovered that private blog networks often share the same Google Analytics ID, AdSense code, or other tracking identifiers across dozens or hundreds of sites. This created a detectable fingerprint. When site operators reused UA-12345678-1 on twenty seemingly unrelated blogs linking to the same money site, pattern-matching algorithms could connect them instantly. The same tracking code that measured visitor behavior became evidence of common ownership and coordinated link schemes. Google filed patents describing methods to identify related sites through shared embedded resources, including analytics scripts. Security researchers published tools that scraped Google Analytics IDs from public HTML and mapped link networks automatically. What began as a convenience for centralized reporting became the easiest way to expose manufactured link ecosystems at scale.

False Positives That Hurt Legitimate Networks

Multi-location franchises, marketing agencies managing client portfolios, and web design firms often share a single analytics property across domains for legitimate operational reasons. Search engines can’t always distinguish intent—identical tracking codes, shared tag manager containers, or common remarketing pixels create the same fingerprint as manipulative networks. The consequences hit hardest when a single client site in an agency’s portfolio draws scrutiny, potentially exposing dozens of unrelated properties. Mitigation requires treating each domain as independent: separate analytics properties, unique GTM containers, and distinct tracking configurations even when consolidated reporting matters internally. For agencies and multi-site operators, the administrative overhead is real but necessary to protect yourself from penalties targeting perceived manipulation.

Common Tagging Mistakes That Create Risk

Using One Analytics Property Across Multiple Domains

The most efficient way to track multiple sites is often the riskiest: reusing a single GA4 property (or, on older sites, a leftover Universal Analytics property) across your entire network. When dozens or hundreds of domains share one tracking ID, they broadcast their relationship to anyone who checks. Competitive researchers, SEO auditors, and search quality teams routinely scan for shared analytics codes because they’re trivially easy to find in page source or browser dev tools. Each property generates a unique identifier that appears in JavaScript tracking code, making cross-site connections instant and verifiable. This footprint persists even if you later remove the tags, since crawlers and archive services preserve historical snapshots. The practice remains common because it simplifies dashboard management and aggregate reporting, but that convenience trades away operational security. If avoiding discovery matters for your network, shared properties create the clearest possible signal that sites operate under common ownership or coordination.

Identical GTM Container Patterns

Google Tag Manager configurations often expose ownership patterns across supposedly unrelated sites. When multiple domains share identical container IDs, trigger sequences, or custom variable naming schemes, search engines can map network relationships. Particularly revealing: custom dimension slots assigned to the same parameters (like author ID in slot 3, site network identifier in slot 7), identical dataLayer variable names with unusual formatting, and synchronized tag firing sequences that match across properties. Even seemingly innocuous choices—how form tracking is structured, which click elements trigger events, the exact naming convention for scroll depth milestones—create recognizable fingerprints. Why it’s interesting: These technical implementation details often persist because teams copy working GTM containers as templates when launching new sites, inadvertently creating clustering signals that algorithmic detection can exploit. For: SEO auditors, network operators reviewing cross-site tracking patterns.

Third-Party Script Fingerprints

Heat mapping services like Hotjar and Crazy Egg inject distinctive JavaScript that creates cross-site fingerprints when the same account ID appears on multiple domains. Search engines can trivially identify these shared identifiers in page source and in the script URLs a page requests. A/B testing platforms (Optimizely, VWO, and, until its shutdown in September 2023, Google Optimize) operate similarly: identical experiment containers signal common ownership. Affiliate tracking pixels present double risk: the pixel itself links sites together, and many affiliates inadvertently use the same subID or campaign parameters across their network properties. Mitigation requires either separate accounts for each property or server-side implementations that don’t expose shared identifiers in client-side code. For network operators: audit view-source on each domain for matching script IDs, then isolate or proxy third-party tools through unique endpoints.
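The view-source audit described above can be partly automated. The sketch below looks for two account identifiers, assuming the vendor snippets follow their commonly seen forms (`hjid:1234567` inside the Hotjar settings object and `fbq('init', '...')` for the Facebook Pixel). Both patterns and the function name are illustrative assumptions, and other vendors need their own patterns.

```python
import re

# Assumed snippet shapes; vendors can change these, so verify against your own pages.
HOTJAR_ID = re.compile(r"hjid\s*:\s*(\d+)")
FB_PIXEL_ID = re.compile(r"fbq\(\s*['\"]init['\"]\s*,\s*['\"](\d+)['\"]")


def third_party_ids(html: str) -> dict[str, set[str]]:
    """Collect Hotjar site IDs and Facebook Pixel IDs found in page source."""
    return {
        "hotjar": set(HOTJAR_ID.findall(html)),
        "facebook_pixel": set(FB_PIXEL_ID.findall(html)),
    }
```

If the same value comes back for two domains, those domains are loading the tool from one account.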

Server-Side Tagging Leaks

Server-side Google Tag Manager routes requests through your own infrastructure, masking third-party domains from browser view. But shared implementation patterns still leak connections: identical custom subdomain structures (analytics.domain1.com, analytics.domain2.com), synchronized GTM container updates across networks, and predictable tagging server paths all create recognizable signatures. Cloud provider IP blocks and SSL certificate patterns further expose related properties when multiple sites use the same tagging architecture or shared compute resources.
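One way to check for the repeated subdomain and path patterns mentioned above is to reduce each tagging endpoint to its “shape” and count repeats. This is a rough sketch: the idea of using the first hostname label plus the URL path as the shape is an assumption made for illustration, and the function name is hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit


def repeated_endpoint_shapes(endpoints: list[str]) -> dict[tuple[str, str], int]:
    """Count (subdomain label, path) pairs that recur across tagging endpoints."""
    shapes: Counter = Counter()
    for url in endpoints:
        parts = urlsplit(url)
        host = parts.hostname or ""
        label = host.split(".")[0]  # e.g. "analytics" in analytics.domain1.com
        shapes[(label, parts.path)] += 1
    # Only shapes seen on more than one endpoint are potential signatures.
    return {shape: count for shape, count in shapes.items() if count > 1}
```

For example, `analytics.domain1.com/g/collect` and `analytics.domain2.com/g/collect` collapse to the same shape and would be reported with a count of 2.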

How to Audit Your Current Setup

Tools for Detecting Shared Tracking Codes

Several free and commercial tools help you spot shared analytics codes across domains—critical for auditing your own network or researching competitors’ structures.

BuiltWith reveals technology stacks including Google Analytics IDs, Tag Manager containers, and Facebook Pixels on any site. Enter a domain to see current tracking implementations, or use the paid Relationship feature to find all sites sharing the same code. Why it’s interesting: Shows both what’s installed and when it was added, helping you spot recent changes. For: SEO auditors, network operators.

SimilarTech and SimilarWeb offer comparable tracking code discovery with additional traffic context. Both let you search by specific analytics IDs to map networks, though detailed lookups require paid plans.

Manual source inspection remains surprisingly effective. Right-click any page, select “View Page Source,” and search for “G-” or “GTM-” to find current Google codes, “UA-” to find leftover Universal Analytics snippets, or “fbq” for Facebook Pixel. Check multiple pages across suspected network sites and document matches in a spreadsheet. Why it’s interesting: Zero-cost method that works when automated tools hit paywalls or miss implementations. For: Bootstrapped operators, researchers validating automated findings.
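If you save the page source of each page you inspect, the spreadsheet step can be generated rather than typed. The following sketch takes a mapping of page URL to saved HTML and emits CSV text that opens in any spreadsheet tool. The combined ID pattern is an approximation, and the function and column names are illustrative.

```python
import csv
import io
import re

# Approximate pattern covering UA-, GTM-, and G- identifiers (an assumption, not a spec).
ID_PATTERN = re.compile(
    r"\b(?:UA-\d{4,10}-\d{1,4}|GTM-[A-Z0-9]{4,8}|G-[A-Z0-9]{6,12})\b"
)


def matches_to_csv(pages: dict[str, str]) -> str:
    """Build CSV text with one row per (page, tracking ID) pair found."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["page", "tracking_id"])
    for page, html in sorted(pages.items()):
        for tracking_id in sorted(set(ID_PATTERN.findall(html))):
            writer.writerow([page, tracking_id])
    return buffer.getvalue()
```

Sorting the resulting sheet by the `tracking_id` column puts pages that share a code next to each other.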

Browser extensions like Ghostery and Wappalyzer provide quick at-a-glance detection while browsing, useful for spot-checking during routine site reviews.

What to Look For in Your Tag Manager

Open your tag manager configuration and look for shared identifiers that repeat across properties. The most detectable pattern is identical container IDs deployed on supposedly unrelated sites—a clear signal you’re managing multiple properties from one account. Check your custom JavaScript variables and triggers: if they use identical naming conventions, fire sequences, or event structures, they create recognizable fingerprints. Examine your dataLayer implementation for consistent variable names and push timing patterns that match across domains. Review your tag firing rules—uniform logic for tracking pageviews, clicks, or conversions suggests centralized management. Custom HTML tags with identical formatting, comments, or vendor scripts reveal shared authorship. Even your folder organization within GTM can leak information if exported configurations show matching structures. The risk compounds when these elements appear alongside other footprints like shared hosting, similar content patterns, or cross-linked properties. Most operators inherit these patterns from templates or agencies, never realizing they’ve created a detectable signature.
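A quick way to quantify how similar two containers look is to compare the names of their tags, triggers, and variables. The sketch below assumes each container has been exported to JSON and that the export keeps its components under a top-level `containerVersion` object with `tag`, `trigger`, and `variable` lists, each item carrying a `name`. Check that layout against your own export before relying on it. The function names are illustrative.

```python
import json


def component_names(export_json: str) -> set[str]:
    """Collect 'kind:name' labels for tags, triggers, and variables in an export."""
    version = json.loads(export_json).get("containerVersion", {})
    names = set()
    for kind in ("tag", "trigger", "variable"):
        for item in version.get(kind, []):
            names.add(f"{kind}:{item.get('name', '')}")
    return names


def name_overlap(export_a: str, export_b: str) -> float:
    """Jaccard similarity of component names: 0.0 is disjoint, 1.0 is identical."""
    a, b = component_names(export_a), component_names(export_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)
```

A score near 1.0 between two supposedly unrelated sites suggests both containers came from the same template.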

Safe Analytics Implementation for Multi-Site Operations

Unique Properties Per Domain

Each domain in your network requires its own isolated analytics property with completely separate credentials. Create distinct Google accounts using different email providers—one might use Gmail, another ProtonMail, another a custom domain email. Never share login credentials across properties or link them through Google’s account-linking features.

Payment methods matter when building a PBN. If upgrading to paid analytics tiers, use different credit cards or payment processors for each account. Google can match billing information across properties, creating exactly the footprint you’re trying to avoid.

Store credentials in separate password manager vaults or use distinct browser profiles for each property. This operational separation prevents accidental cross-contamination and ensures each domain maintains its own tracking identity with zero administrative overlap visible to search engines.

Randomizing Tag Manager Configurations

Varying how you structure tags across properties breaks the structural patterns that network detection algorithms exploit. Assign triggers semantically distinct names (“page_view_01” on one site, “pageload_tracker” on another, “view_event” on a third) rather than using identical conventions everywhere. Reorganize variable hierarchies: flatten structures on some properties, nest deeply on others, or use different naming schemes (camelCase, snake_case, descriptive phrases). Shuffle folder organization so related tags don’t cluster identically across sites. Randomize custom JavaScript variable names and adjust firing priorities so execution order differs. Change how you handle consent management and cookie domains per property. This granular variation requires more setup documentation but eliminates the synchronized configurations that flag automated deployments. Why it’s interesting: Most operators focus on randomizing tag IDs while leaving structural fingerprints intact. For: network operators, affiliate managers, SEO teams managing multiple client properties.

When Server-Side Tracking Helps (and When It Doesn’t)

Server-side Google Tag Manager moves tracking requests from browsers to your server, which can reduce client-side JavaScript footprint and bypass some ad blockers. The privacy win is real but limited: your GTM container ID, measurement IDs, and cookie values still create linkable patterns across domains if you reuse them. Server-side setups help when you customize endpoint URLs, randomize identifiers per property, and control cookie domains carefully. They don’t help if you route everything through the same Cloud Run instance with identical configuration or send the same GA4 measurement ID to Google’s servers from fifty sites. The architecture shifts where data flows, not whether it creates footprints. For network operators, server-side is useful infrastructure but not a magic shield against fingerprinting.

What to Do If You’ve Already Created Footprints

If you’ve already deployed shared analytics IDs or tag manager containers across a network, the fix isn’t instant but it’s manageable. Start with an audit: catalog every site in your network and document which tracking codes appear where. Use Google Analytics property settings or Tag Manager container lists to identify overlap. This inventory forms your migration roadmap.
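Once the inventory exists, finding overlap is a matter of inverting it: instead of “which codes are on this site,” ask “which sites carry this code.” A minimal sketch, assuming the inventory is a mapping of domain to the set of tracking IDs found there (the function name and data shape are illustrative):

```python
from collections import defaultdict


def shared_ids(inventory: dict[str, set[str]]) -> dict[str, list[str]]:
    """Return each tracking ID that appears on two or more domains."""
    domains_by_id: defaultdict = defaultdict(set)
    for domain, ids in inventory.items():
        for tracking_id in ids:
            domains_by_id[tracking_id].add(domain)
    return {
        tracking_id: sorted(domains)
        for tracking_id, domains in domains_by_id.items()
        if len(domains) > 1
    }
```

Every key in the result is an identifier that needs to be replaced, and its value is the list of sites to include in the migration.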

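The safest recovery path creates unique tracking per site. For Google Analytics, generate new properties for each domain. For Tag Manager, deploy separate containers. Yes, this fragments your data initially. Avoid restoring a unified view through GA4 cross-domain measurement, because it requires the same measurement ID on every domain and therefore recreates the footprint. Consolidate reporting away from the page instead, for example with GA4 roll-up properties (an Analytics 360 feature that aggregates existing properties without changing the tags on each site) or by combining exports in your own reporting layer. Plan the migration in batches rather than switching everything simultaneously, which reduces technical risk and makes troubleshooting easier.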

Expect the transition to take 4-8 weeks depending on network size. Implement new tracking codes first, run dual tracking briefly to verify data accuracy, then remove the shared identifiers. Don’t delete old properties immediately; archive them for historical reference. Monitor search performance during and after migration, though attribution is tricky since Google rarely confirms specific penalties.
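The dual-tracking check can be as simple as comparing daily pageview counts from the old and new properties and flagging days that diverge. In this sketch the 5% tolerance, the date-keyed dictionaries, and the function name are all assumptions chosen for illustration. Pick a threshold that matches the normal noise in your own data.

```python
def dual_tracking_mismatches(
    old_counts: dict[str, int],
    new_counts: dict[str, int],
    tolerance: float = 0.05,
) -> list[str]:
    """Return the days where old and new pageview counts differ by more than tolerance."""
    mismatches = []
    for day in sorted(old_counts.keys() | new_counts.keys()):
        old = old_counts.get(day, 0)  # a missing day counts as zero pageviews
        new = new_counts.get(day, 0)
        baseline = max(old, new)
        if baseline == 0:
            continue  # nothing recorded by either property
        if abs(old - new) / baseline > tolerance:
            mismatches.append(day)
    return mismatches
```

An empty list over a week or two of overlap is a reasonable signal that the shared identifiers can be removed.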

If deindexation has already occurred, removing the shared tracking won’t guarantee reinclusion. You’ll need broader remediation: diversify hosting infrastructure, ensure unique content, vary site structure, and clean up toxic links between network properties. Reconsideration requests apply only when Search Console shows a manual action; in that case, submit one only after confirming footprints are genuinely eliminated, and document the specific changes made. Algorithmic demotions have no request process and lift only as the sites are recrawled and reassessed. Recovery timelines vary from weeks to months, depending on how aggressively Google flagged the network.

Clean analytics hygiene isn’t optional if you operate at scale or manage link networks. Shared tracking IDs create discoverable fingerprints that connect properties you’d prefer to keep separate, giving search engines a ready-made map of your footprint. The fix is straightforward: isolate tracking per property, randomize implementation patterns, verify tag isolation through cross-domain testing, and run quarterly audits before algorithmic updates surface the problem. Proactive tag hygiene costs hours now but prevents deindexation events that cost months of recovery work later. Treat analytics implementations as operational security, not an afterthought.