How IaC Stops Your Scraping Infrastructure From Breaking at 3 AM

Infrastructure as Code treats your scraping infrastructure (proxies, scrapers, storage buckets, rotating IP pools) as version-controlled text files from which environments can be rebuilt automatically. Instead of manually configuring servers or clicking through cloud dashboards when a proxy pool fails at 3 AM, you define everything in declarative configuration that recreates your entire scraping setup in minutes.

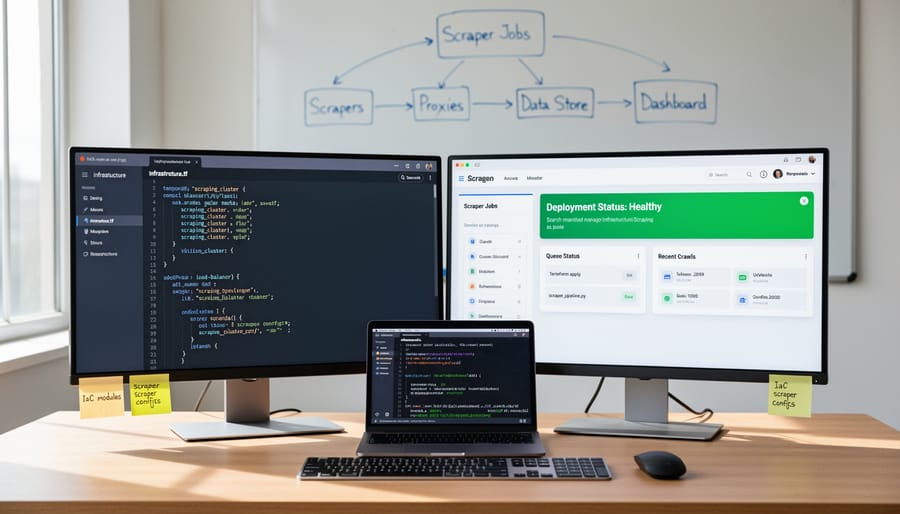

Define your proxy rotation service, scraper instances, and data pipelines in Terraform or Pulumi configuration files that live in your repository. When a proxy provider changes their API, you update one configuration block and redeploy across all environments. Your scraping infrastructure becomes reproducible: new team members spin up identical staging environments with a single command, and disaster recovery means running the same script that built your production setup initially.

This matters for scrapers managing dozens of targets because manual configuration doesn’t scale. You need consistent environments across development, testing, and production. You need quick rollbacks when a configuration change breaks your data pipeline. You need documentation that’s never out of sync because the code is the documentation.

IaC transforms scraping operations from fragile, undocumented server configurations into tested, versioned infrastructure that survives team changes and provider migrations. For small scraping teams, it’s the difference between spending weekends debugging mystery configuration drift and confidently deploying infrastructure changes during business hours.

What IaC Actually Means for Scraping Operations

Infrastructure as Code means defining your entire scraping setup in text files that machines can execute automatically. Instead of manually clicking through cloud dashboards to launch a proxy server, configure a scraper, or set up a database, you write declarative configuration files that describe what you need. One command then spins up proxies, deploys scrapers, provisions storage, and connects monitoring—every time, identically.

For scraping operations, this matters because your infrastructure needs change constantly. A client needs LinkedIn data today, e-commerce pricing tomorrow, and news monitoring next week. Each job requires different proxy pools, parsing logic, rate limits, and storage schemas. Without IaC, you’re manually reconfiguring servers, forgetting which settings worked last time, and introducing human error at every step.

In link-building and SEO data pipelines specifically, IaC solves three recurring problems. First, reproducibility: when a scraper breaks or gets blocked, you can tear down and rebuild the exact environment in minutes rather than debugging a snowflake server you configured six months ago. Second, scalability: processing 10,000 URLs versus 10 million means spinning up more workers, which IaC handles declaratively rather than through frantic manual provisioning. Third, compliance and audit trails: your configuration files document exactly which proxies, user agents, and rate limits you deployed, crucial when clients ask how data was collected.
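To make the scalability point concrete, here is a minimal sketch of the arithmetic behind a declared worker count. The throughput figure (3,600 URLs per worker per hour) and the 24-hour deadline are illustrative assumptions, not measurements from any real fleet.

```python
import math


def workers_needed(total_urls: int, urls_per_worker_per_hour: int, deadline_hours: float) -> int:
    """Return the smallest worker count that can finish the job within the deadline."""
    if total_urls < 0 or urls_per_worker_per_hour <= 0 or deadline_hours <= 0:
        raise ValueError("inputs must be positive (total_urls may be zero)")
    capacity_per_worker = urls_per_worker_per_hour * deadline_hours
    # Always keep at least one worker so the stack is never declared empty.
    return max(1, math.ceil(total_urls / capacity_per_worker))


# Hypothetical numbers: one worker handles 3,600 URLs per hour, deadline is 24 hours.
small_job = workers_needed(10_000, 3_600, 24)
large_job = workers_needed(10_000_000, 3_600, 24)
print(small_job, large_job)
```

The resulting number would typically feed a single variable in your infrastructure definition, so scaling from the small job to the large one is a one-line change rather than a provisioning session.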

Think of IaC as recipes for infrastructure. You write the recipe once, test it, version-control it, and execute it reliably whenever needed. For scraping teams juggling multiple clients and constantly shifting requirements, this transforms infrastructure from a bottleneck into a repeatable, documented process.

The Core Components You’ll Actually Use

Provisioning Your Scraping Nodes

Instead of manually spinning up cloud servers each time you need scraping capacity, define your infrastructure in version-controlled configuration files. Tools like Terraform, Pulumi, and AWS CloudFormation let you declare proxy servers, scraper instances, and storage buckets as code blocks that provision consistently across environments.

Start by defining your proxy layer—whether residential proxy pools, datacenter IPs, or rotating proxies—as reusable modules with parameters for region, rotation speed, and concurrency limits. Declare your scraper instances next, specifying CPU, memory, and auto-scaling triggers based on queue depth or CPU utilization. Configure storage resources (S3 buckets, databases, object stores) with lifecycle policies, access controls, and backup schedules written directly into your IaC templates.

This approach eliminates configuration drift, makes monitoring proxy infrastructure easier through consistent naming conventions, and lets you replicate entire scraping stacks in minutes for testing or geographic expansion. When a proxy provider changes or you need to migrate regions, update the code once and redeploy everywhere simultaneously.
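As a rough illustration of declaring scraper instances and storage as code, the sketch below builds a Terraform configuration in its JSON syntax from a few parameters. The function name, the tag scheme, the bucket name, and the AMI placeholder are all assumptions made for this example; a real stack would also need credentials, networking, and a valid image ID.

```python
import json


def scraper_stack(env: str, region: str, instance_type: str, worker_count: int, ami_id: str) -> dict:
    """Build a Terraform JSON document describing a small scraper fleet and a results bucket."""
    return {
        "provider": {"aws": {"region": region}},
        "resource": {
            "aws_instance": {
                "scraper": {
                    "count": worker_count,
                    "ami": ami_id,
                    "instance_type": instance_type,
                    # Consistent tags make the fleet easy to find in monitoring.
                    "tags": {"Name": f"scraper-{env}", "Environment": env},
                }
            },
            "aws_s3_bucket": {
                "results": {"bucket": f"scrape-results-{env}"}
            },
        },
    }


# "ami-EXAMPLE" is a placeholder, not a real image ID.
staging = scraper_stack("staging", "us-east-1", "t3.small", 3, "ami-EXAMPLE")
print(json.dumps(staging, indent=2))
```

Writing the output to a file such as main.tf.json would let the same function produce staging and production stacks that differ only in their parameters.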

Configuration Management That Scales

Infrastructure as Code lets you define scraper configuration in version-controlled files rather than SSH-ing into each instance to tweak settings. Configuration management tools like Ansible and Puppet read declarative files (YAML playbooks and variables for Ansible, manifests and Hiera data for Puppet) that specify rate limits, retry logic, target URLs, user-agent rotation schedules, and timeout thresholds, then apply those settings across your entire fleet in minutes.

For example, a single configuration file can encode proxy rotation intervals, request headers, and concurrency limits for fifty scrapers monitoring e-commerce sites. When a target site changes its rate limiting, you update one line in the config, commit to Git, and push the change to all instances automatically—no manual edits or missed servers.

This approach proves essential when scaling scraper fleets beyond a handful of machines. Declarative configs act as documentation showing exactly how each scraper behaves, making it straightforward for team members to understand settings without reading application code. Version control provides an audit trail of configuration changes, useful when debugging why scrapers started getting blocked after a recent update.
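Here is one way a single configuration file might look in practice: fleet-wide defaults plus per-target overrides, merged when a scraper starts. The key names and the example domains are hypothetical, chosen only to mirror the settings mentioned above.

```python
import json

# Hypothetical config file contents; in practice this would live in Git.
CONFIG_JSON = """
{
  "defaults": {
    "requests_per_minute": 30,
    "max_concurrency": 4,
    "proxy_rotation_seconds": 120,
    "timeout_seconds": 20,
    "headers": {"Accept-Language": "en-US"}
  },
  "targets": {
    "shop-a.example": {"requests_per_minute": 10},
    "shop-b.example": {"max_concurrency": 8, "headers": {"Accept-Language": "de-DE"}}
  }
}
"""


def settings_for(config: dict, target: str) -> dict:
    """Merge the fleet defaults with any overrides declared for one target."""
    merged = dict(config["defaults"])
    for key, value in config["targets"].get(target, {}).items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Nested settings such as headers are merged, not replaced wholesale.
            merged[key] = {**merged[key], **value}
        else:
            merged[key] = value
    return merged


config = json.loads(CONFIG_JSON)
print(settings_for(config, "shop-a.example"))
```

When shop-a.example tightens its rate limiting, the change is the single `requests_per_minute` value in its override block, and the Git history records who changed it and when.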

State management becomes critical here. Terraform records the resources it has provisioned in a state file, and a Terraform plan compares that state against the real infrastructure to flag drift when someone makes manual changes. Re-applying the configuration, or a scheduled run of a configuration management tool such as Ansible or Puppet, pulls servers back to the declared state and prevents the inconsistencies that cause unpredictable scraper behavior across your infrastructure.
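The idea behind drift detection can be sketched in a few lines: compare what you declared with what is actually running, then re-apply the declared values. This is a toy model with made-up node names and settings, not how Terraform or any other tool implements it internally.

```python
def detect_drift(declared: dict, actual: dict) -> dict:
    """Return {node: {setting: (declared_value, actual_value)}} for every mismatch."""
    drift = {}
    for node, wanted in declared.items():
        found = actual.get(node, {})
        diffs = {k: (v, found.get(k)) for k, v in wanted.items() if found.get(k) != v}
        if diffs:
            drift[node] = diffs
    return drift


def correct_drift(declared: dict, actual: dict) -> dict:
    """Return a copy of the actual state with every declared setting re-applied."""
    corrected = {node: dict(settings) for node, settings in actual.items()}
    for node, wanted in declared.items():
        corrected.setdefault(node, {}).update(wanted)
    return corrected


# Hypothetical fleet: someone raised the rate limit on scraper-2 by hand.
declared = {
    "scraper-1": {"requests_per_minute": 30, "timeout_seconds": 20},
    "scraper-2": {"requests_per_minute": 30, "timeout_seconds": 20},
}
actual = {
    "scraper-1": {"requests_per_minute": 30, "timeout_seconds": 20},
    "scraper-2": {"requests_per_minute": 120, "timeout_seconds": 20},
}
print(detect_drift(declared, actual))
```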

Wiring IaC Into Your CI/CD Pipeline

Testing Infrastructure Changes Before They Break Production

Testing infrastructure changes in isolation prevents downtime that costs both data and credibility. The core approach: replicate your production environment using IaC definitions, validate changes there, then promote only what passes.

Start with staging environments that mirror production topology—same proxy pools, scraper configurations, request rates, and data pipelines. Tools like Terraform workspaces or separate stack instances (dev, staging, prod) let you maintain identical infrastructure definitions with environment-specific variables. Deploy your proposed changes to staging first, run actual scraping jobs against live targets at production-like scale, and monitor for proxy bans, rate limit violations, or parser failures.

Automated testing catches configuration errors before deployment. Write validation scripts that check proxy rotation logic, verify resource quotas match your traffic patterns, and confirm scrapers handle edge cases (missing fields, changed selectors, authentication flows). Integrate these tests into your CI/CD pipeline so every infrastructure change triggers a test run. For example, if you’re adjusting proxy pool size or switching providers, staging reveals whether new IPs trigger CAPTCHAs or geographic blocks.
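A validation script of the kind described above might look like the following sketch. The specific rules (at least two proxies, a per-minute provider quota, required `title` and `url` selectors) and the key names are assumptions for illustration; your own checks would reflect your providers and parsers.

```python
def validate_scraper_config(cfg: dict) -> list:
    """Return a list of human-readable problems; an empty list means the config passes."""
    errors = []
    proxies = cfg.get("proxies", [])
    if len(proxies) < 2:
        errors.append("proxy pool needs at least two entries to rotate")
    if len(set(proxies)) != len(proxies):
        errors.append("proxy pool contains duplicate entries")

    rpm = cfg.get("requests_per_minute", 0)
    quota = cfg.get("provider_quota_per_minute", 0)
    if rpm <= 0:
        errors.append("requests_per_minute must be positive")
    elif rpm > quota:
        errors.append("requests_per_minute exceeds the provider quota")

    # Parsers break quietly when a selector is missing, so check them up front.
    missing = [f for f in ("title", "url") if f not in cfg.get("selectors", {})]
    if missing:
        errors.append("missing selectors: " + ", ".join(missing))
    return errors


good = {
    "proxies": ["proxy-1.example:8080", "proxy-2.example:8080"],
    "requests_per_minute": 60,
    "provider_quota_per_minute": 100,
    "selectors": {"title": "h1", "url": "link[rel=canonical]"},
}
print(validate_scraper_config(good))
```

In a pipeline, a non-empty result would fail the build so the change never reaches staging, let alone production.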

Use feature flags or canary deployments for risky changes—route 5% of scraping traffic through new configurations while monitoring error rates and data quality. Roll back instantly if metrics degrade. This iterative validation loop keeps production stable while you experiment with optimization strategies that actually improve scraper reliability.

Deployment Patterns That Minimize Downtime

Updating scraping infrastructure without pausing crawls requires deployment patterns that shift traffic in a controlled, reversible way. Blue-green deployments maintain two identical environments: one active (blue) and one idle (green). You deploy changes to green, run smoke tests against a subset of target sites, then switch traffic over. If scrapers fail or proxy configurations break, you roll back instantly by redirecting to blue.
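The switch-and-rollback logic can be sketched as a small state machine. The class, the version labels, and the smoke-test callable are hypothetical; in a real setup the "switch" would be a load balancer or queue-routing change rather than an attribute assignment.

```python
from typing import Callable


class BlueGreen:
    """Track which of two identical environments currently receives traffic."""

    def __init__(self, blue_version: str, green_version: str) -> None:
        self.versions = {"blue": blue_version, "green": green_version}
        self.active = "blue"

    @property
    def idle(self) -> str:
        return "green" if self.active == "blue" else "blue"

    def deploy(self, version: str, smoke_test: Callable[[str], bool]) -> bool:
        """Deploy to the idle environment and switch only if the smoke test passes."""
        target = self.idle
        self.versions[target] = version
        if not smoke_test(version):
            return False  # traffic never left the active environment
        self.active = target
        return True

    def rollback(self) -> None:
        """Point traffic back at the previously active environment."""
        self.active = self.idle


fleet = BlueGreen("v1", "v1")
switched = fleet.deploy("v2", smoke_test=lambda version: True)
print(switched, fleet.active, fleet.versions[fleet.active])
```

Because the old environment is left untouched until the next deploy, rolling back is just flipping the pointer again.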

Canary releases work better for large scraping clusters. Deploy updated scrapers to 5-10% of nodes first, monitor error rates and success metrics for an hour, then incrementally roll out to the full fleet. This catches configuration errors (bad API keys, misconfigured rate limits) before they tank your entire operation.

For scraping specifically, test deployments against low-priority targets first—reference sites or internal test pages—before routing production traffic. Monitor queue depth, response times, and parse failures as leading indicators. If canary nodes show increased proxy bans or parsing errors, halt the rollout.
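A canary rollout needs two decisions: which nodes get the new configuration, and when to stop. The sketch below uses a stable hash of the node ID for the first and a simple error-rate comparison for the second. The node naming scheme and the 2-percentage-point tolerance are assumptions, not recommended values.

```python
import hashlib


def in_canary(node_id: str, percent: int) -> bool:
    """Deterministically place a node in the canary group based on a hash of its ID."""
    digest = hashlib.sha256(node_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < percent


def should_halt(baseline_error_rate: float, canary_error_rate: float, tolerance: float = 0.02) -> bool:
    """Halt the rollout when canary errors exceed the baseline by more than the tolerance."""
    return canary_error_rate > baseline_error_rate + tolerance


# Hypothetical fleet of 200 nodes, 10% canary.
nodes = [f"scraper-{i:03d}" for i in range(200)]
canary_nodes = [n for n in nodes if in_canary(n, 10)]
print(len(canary_nodes), should_halt(0.01, 0.08))
```

Hashing rather than random sampling means the same nodes stay in the canary group across runs, and raising the percentage only adds nodes to the group, so a widening rollout never moves a node back to the old configuration.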

Infrastructure-as-code makes these patterns practical. Define blue and green environments in Terraform modules, swap them via load balancer rules. Use Ansible tags to deploy scrapers to canary groups first. Version your infrastructure configs alongside scraper code so rollbacks revert both simultaneously. The goal: infrastructure updates become routine maintenance, not high-stakes events that risk losing crawl progress or burning through proxy quotas during recovery.

When IaC Saves You From Scraping Disasters

IaC turns scraping disasters from multi-hour firefights into scripted recoveries. When a target site blocks your proxy IP range mid-crawl, spinning up new proxy pools takes minutes instead of hours: your Terraform config deploys replacement infrastructure across different providers while your team focuses on the actual issue. One link-auditing team avoided a three-day project delay when AWS throttled their scraper fleet: they replicated the exact environment in another region using their IaC templates, identified a misconfigured rate-limiter in staging, and pushed the fix before the client noticed.

Rate-limit debugging becomes straightforward when you can spin up identical copies of production scrapers in isolated environments. Instead of guessing whether the issue stems from your code, proxy configuration, or request timing, you recreate the exact setup—same Python version, same proxy rotation logic, same request headers—and iterate without risking your live crawls. For researchers managing periodic data collection, IaC means reproducing last month’s scraper configuration precisely, ensuring consistency across datasets even when underlying libraries have updated.

Scaling presents the clearest win. During a 50,000-URL audit with a tight deadline, one team used their IaC scripts to deploy twenty parallel scraper instances across multiple cloud regions in under ten minutes. Each instance pulled work from a shared queue, respected domain-specific rate limits through centralized configuration, and reported results to the same database—all defined in version-controlled templates. When the job finished, they tore down the fleet with a single command, paying only for compute time used. Without IaC, provisioning that infrastructure manually would have consumed half the available project timeline.
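The shared-queue pattern in that story can be sketched with threads standing in for scraper instances. Everything here is a simplification under stated assumptions: the delay values and domains are made up, `fetch` is a stand-in for a real HTTP request, and a real fleet would use a networked queue and database rather than in-process objects.

```python
import queue
import threading
import time
from urllib.parse import urlsplit

# Hypothetical centralized rate limits: minimum seconds between requests per domain.
RATE_LIMITS = {"a.example": 0.01, "b.example": 0.02}
DEFAULT_DELAY = 0.01


class DomainThrottle:
    """Space out requests per domain, shared by every worker."""

    def __init__(self, delays: dict, default: float) -> None:
        self._delays = delays
        self._default = default
        self._lock = threading.Lock()
        self._next_allowed = {}

    def wait(self, domain: str) -> None:
        delay = self._delays.get(domain, self._default)
        with self._lock:
            now = time.monotonic()
            start = max(now, self._next_allowed.get(domain, now))
            self._next_allowed[domain] = start + delay  # reserve the next slot
        time.sleep(max(0.0, start - now))


def run_fleet(urls: list, worker_count: int, fetch) -> dict:
    """Run workers that pull URLs from one queue and write to one result store."""
    jobs = queue.Queue()
    for url in urls:
        jobs.put(url)
    throttle = DomainThrottle(RATE_LIMITS, DEFAULT_DELAY)
    results, results_lock = {}, threading.Lock()

    def worker() -> None:
        while True:
            try:
                url = jobs.get_nowait()
            except queue.Empty:
                return  # queue drained, worker shuts down
            throttle.wait(urlsplit(url).hostname or "")
            outcome = fetch(url)
            with results_lock:
                results[url] = outcome

    threads = [threading.Thread(target=worker) for _ in range(worker_count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


urls = [f"https://{d}/page/{i}" for d in ("a.example", "b.example") for i in range(3)]
results = run_fleet(urls, worker_count=4, fetch=lambda url: len(url))
print(len(results))
```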

Getting Started Without Overengineering

Start small and iterate. Begin by containerizing a single scraper with Docker—package your Python or Node.js script with dependencies, proxy configuration, and environment variables into one portable unit. This gives you reproducible deployments without worrying about server-level dependencies breaking between runs.

For: SEOs running scrapers on multiple machines who waste time debugging “works on my laptop” problems.

Next, add basic Terraform to spin up cloud instances on demand. Write a simple .tf file that provisions one EC2 instance or DigitalOcean Droplet, installs Docker, and runs your container. Store this file in Git alongside your scraper code. Now infrastructure changes become code reviews, not SSH sessions you forget to document.

Why it matters: Version-controlled infrastructure means you can recreate your entire scraping setup from scratch in minutes, not hours of manual clicking.

Prioritize automating proxy rotation configuration first, because hardcoded proxy lists scattered across scripts create maintenance nightmares and credential leaks. Use environment variables, or secure your proxy credentials through config management. Defer complex monitoring dashboards and auto-scaling policies until you have real usage patterns to optimize against.
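As a minimal sketch of the environment-variable approach, the function below builds a proxy URL from settings injected at deploy time. The variable names (`PROXY_HOST`, `PROXY_PORT`, `PROXY_USER`, `PROXY_PASS`) are assumptions for this example; use whatever names your container or config management tool already sets.

```python
import os
from urllib.parse import quote

REQUIRED = ("PROXY_HOST", "PROXY_PORT", "PROXY_USER", "PROXY_PASS")


def proxy_url_from_env(env=None) -> str:
    """Build a proxy URL from environment variables instead of hardcoded values."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        # Fail fast at startup rather than midway through a crawl.
        raise RuntimeError("missing proxy settings: " + ", ".join(missing))
    # Percent-encode credentials so characters like '@' or ':' don't break the URL.
    user = quote(env["PROXY_USER"], safe="")
    password = quote(env["PROXY_PASS"], safe="")
    return f"http://{user}:{password}@{env['PROXY_HOST']}:{env['PROXY_PORT']}"


# Example values only; real credentials would come from the deployment environment.
example_env = {
    "PROXY_HOST": "proxy.example",
    "PROXY_PORT": "8080",
    "PROXY_USER": "scraper",
    "PROXY_PASS": "p@ss:word",
}
print(proxy_url_from_env(example_env))
```

The scraper code never contains a credential, so the same container image runs unchanged in development, staging, and production.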

The trap: Don’t build a Kubernetes cluster for three scrapers. Start with a single Dockerfile, one Terraform file, and basic GitHub Actions for deployment. Add complexity only when manual processes become painful bottlenecks, not because infrastructure tutorials suggest it.

For: Link-builders and data analysts who need reliable scraping infrastructure without becoming DevOps engineers.

IaC isn’t DevOps theater. It’s how you build scraping infrastructure that can be rebuilt on demand when proxies fail or scrapers break. Write your configuration once, then deploy it hundreds of times without SSH-ing into servers at 3 AM. The real win: you analyze link graphs and content patterns instead of babysitting infrastructure. Repeatability means reliability, and reliability means you spend hours mining data, not troubleshooting deployment drift.